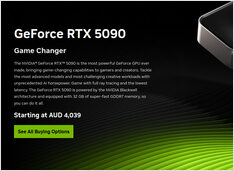

RTX 5090 in Australia: March 2026 Pricing and Stock Reality

RTX 5090 in AU is scarce and overpriced

Australia has RTX 5090 stock. Barely. And if you find one, you will pay a premium that feels detached from reality.

Comparison of Chunking Strategies in RAG

Chunking is the most underestimated hyperparameter in Retrieval-Augmented Generation (RAG): it silently determines what your LLM “sees”, how expensive ingestion becomes, and how much of the LLM’s context window you burn per answer.

Control data and models with self-hosted LLMs

Self-hosting LLMs keeps data, models, and inference under your control: a practical path to AI sovereignty for teams, enterprises, and nations.

LLM speed test on RTX 4080 with 16GB VRAM

Running large language models locally gives you privacy, offline capability, and zero API costs. This benchmark reveals exactly what one can expect from 14 popular LLMs on Ollama on an RTX 4080.

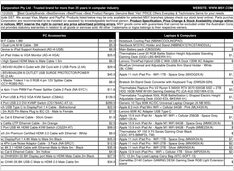

January 2025 GPU and RAM price check

Today we are looking at top-level consumer GPUs and RAM modules. Specifically, I’m looking at RTX-5080 and RTX-5090 prices, and 32GB (2x16GB) DDR5 6000.

Choose the right terminal for your Linux workflow

One of the most essential tools for Linux users is the terminal emulator.

Real AUD pricing from Aussie retailers now

The NVIDIA DGX Spark (GB10 Grace Blackwell) is now available in Australia at major PC retailers with local stock. If you’ve been following the global DGX Spark pricing and availability, you’ll be interested to know that Australian pricing ranges from $6,249 to $7,999 AUD depending on storage configuration and retailer.

Testing Cognee with local LLMs - real results

Cognee is a Python framework for building knowledge graphs from documents using LLMs. But does it work with self-hosted models?

How I fixed network problems in Ubuntu

After automatically installing a new kernel, Ubuntu 24.04 lost its Ethernet connection. This frustrating issue has now hit me a second time, so I’m documenting the solution here to help others facing the same problem.

Short post, just noting the price

With RAM prices this volatile, let’s track RAM prices in Australia ourselves to get a clearer picture.

RAM prices surge 163-619% as AI demand strains supply

The memory market is experiencing unprecedented price volatility in late 2025, with RAM prices surging dramatically across all segments.

AI-suitable Consumer GPU Prices - RTX 5080 and RTX 5090

Let’s compare prices for top-level consumer GPUs that are suitable for LLMs in particular and AI in general. Specifically, I’m looking at RTX-5080 and RTX-5090 prices.

Deploy enterprise AI on budget hardware with open models

The democratization of AI is here. With open-source LLMs like Llama, Mistral, and Qwen now rivaling proprietary models, teams can build powerful AI infrastructure using consumer hardware - slashing costs while maintaining complete control over data privacy and deployment.

Enable GPU acceleration for Docker Model Runner with NVIDIA CUDA support

Docker Model Runner is Docker’s official tool for running AI models locally, but enabling NVIDIA GPU acceleration in it requires specific configuration.

GPT-OSS 120b benchmarks on three AI platforms

I dug up some interesting performance tests of GPT-OSS 120b running on Ollama across three different platforms: NVIDIA DGX Spark, Mac Studio, and RTX 4080. The GPT-OSS 120b model from the Ollama library weighs in at 65GB, which means it doesn’t fit into the 16GB VRAM of an RTX 4080 (or the newer RTX 5080).