Llama-Server Router Mode - Dynamic Model Switching Without Restarts

Serve and swap LLMs without restarts.

For a long time, llama.cpp had a glaring limitation: you could only serve one model per process, and switching models meant a restart.
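
Here is a minimal client-side sketch of what switching looks like without a restart. It assumes the router picks the backing model from the standard OpenAI "model" field and listens on llama-server's default port 8080; check both against your own setup. chat_request and send are illustrative helpers, and the model names are placeholders.

```python
import json
import urllib.request

# Assumption: a llama-server instance in router mode on the default port.
BASE_URL = "http://localhost:8080/v1"


def chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for the given model.

    The router is assumed to select (and load, if needed) whichever model
    is named in the "model" field; nothing else in the request changes.
    """
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(req: urllib.request.Request, timeout: float = 120.0) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        reply = json.load(resp)
    return reply["choices"][0]["message"]["content"]
```

Calling send(chat_request("model-a", "Hi")) and then send(chat_request("model-b", "Hi")) sends both requests to the same URL, with no server restart in between. Here model-a and model-b stand in for the names your server lists at /v1/models.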

Any-key pause for Bash, CMD, PowerShell, and macOS.

Batch files and shell scripts often need a short wait so that a double-clicked window or an installer log stays visible. Windows CMD has a dedicated pause command; Unix shells use the read builtin.
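
The same any-key pause can also be sketched in Python, for comparison with the shell versions. It uses msvcrt.getch() on Windows. On Unix-like systems it temporarily switches the terminal into cbreak mode (no line buffering, no echo) and reads one byte. The sketch assumes input comes from a real terminal, and wait_for_any_key is a name chosen here, not a standard function.

```python
import os
import sys


def wait_for_any_key(prompt="Press any key to continue . . . ", fd=None):
    """Block until a single key is pressed (no Enter needed); return its first byte."""
    print(prompt, end="", flush=True)
    if os.name == "nt":
        # Windows: msvcrt.getch() reads one keypress without echoing it.
        import msvcrt
        key = msvcrt.getch()
    else:
        # Unix-like: enter cbreak mode (no line buffering, no echo),
        # read one byte, then restore the saved terminal settings.
        import termios
        import tty
        if fd is None:
            fd = sys.stdin.fileno()
        saved = termios.tcgetattr(fd)
        try:
            tty.setcbreak(fd)
            key = os.read(fd, 1)
        finally:
            termios.tcsetattr(fd, termios.TCSADRAIN, saved)
    print()
    return key
```

Keys such as the arrows send multi-byte escape sequences, so only the first byte is returned. That is enough for a "press any key" pause.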

Serve open models fast with SGLang.

SGLang is a high-performance serving framework for large language models and multimodal models, built to deliver low-latency and high-throughput inference across everything from a single GPU to distributed clusters.

Hot-swap local LLMs without changing clients.

Soon you are juggling vLLM, llama.cpp, and more, each stack on its own port. Every client still wants a single /v1 base URL; otherwise you keep shuffling ports, profiles, and one-off scripts. llama-swap is a /v1 proxy that sits in front of those stacks.
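
At its core, such a proxy makes a routing decision: it reads the request's "model" field and picks an upstream base URL. The sketch below is a hypothetical illustration of that lookup only. It is not llama-swap's code or configuration format, and the model names and ports are made up.

```python
import json

# Hypothetical routing table: model name -> upstream OpenAI-compatible base URL.
UPSTREAMS = {
    "qwen-coder": "http://127.0.0.1:8000/v1",   # e.g. a vLLM server
    "gemma-small": "http://127.0.0.1:8081/v1",  # e.g. a llama-server instance
}


def route(path: str, raw_body: bytes) -> str:
    """Return the upstream URL that a /v1 request should be forwarded to."""
    if not path.startswith("/v1/"):
        raise ValueError(f"not an OpenAI-style path: {path}")
    model = json.loads(raw_body).get("model")
    try:
        base = UPSTREAMS[model]
    except KeyError:
        raise LookupError(f"no upstream configured for model {model!r}") from None
    # Keep the endpoint suffix (e.g. /chat/completions) and swap in the upstream base.
    return base + path[len("/v1"):]
```

A real proxy must also forward the request and the response, including streamed responses. In llama-swap's case, it also starts and stops the backing servers as models are swapped.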

OpenHands CLI QuickStart in minutes

OpenHands is an open-source, model-agnostic platform for AI-driven software development agents. It lets an agent behave more like a coding partner than a simple autocomplete tool.

Self-host OpenAI-compatible APIs with LocalAI in minutes.

LocalAI is a self-hosted, local-first inference server designed to behave like a drop-in OpenAI API for running AI workloads on your own hardware (laptop, workstation, or on-prem server).

I keep coming back to llama.cpp for local inference: it gives you control that Ollama and others abstract away, and it just works. It makes it easy to run GGUF models interactively with llama-cli or to expose an OpenAI-compatible HTTP API with llama-server.

How to Install, Configure, and Use OpenCode

OpenCode is an open source AI coding agent you can run in the terminal (TUI + CLI) with optional desktop and IDE surfaces. This is the OpenCode Quickstart: install, verify, connect a model/provider, and run real workflows (CLI + API).

Selenium, chromedp, Playwright, ZenRows - in Go.

Choosing the right browser automation and web scraping stack in Go affects speed, maintenance, and where your code runs.

.desktop launchers on Ubuntu 24 - Icon, Exec, locations

Desktop launchers on Ubuntu 24 (and most Linux desktops) are defined by .desktop files: small, text-based config files that describe an application or link.
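
Because a .desktop file is plain key=value text under a [Desktop Entry] group, you can sketch a generator with Python's configparser. This is an approximation: configparser does not implement every rule of the Desktop Entry format. make_desktop_entry and the paths in the usage note are hypothetical.

```python
import configparser
import io


def make_desktop_entry(name: str, exec_cmd: str, icon: str, comment: str = "") -> str:
    """Render a minimal application launcher in .desktop (Key=Value) format."""
    # interpolation=None keeps field codes such as %U or %f in Exec verbatim.
    cp = configparser.ConfigParser(interpolation=None)
    cp.optionxform = str  # desktop entry keys are case-sensitive; don't lowercase them
    cp["Desktop Entry"] = {
        "Type": "Application",
        "Name": name,
        "Exec": exec_cmd,
        "Icon": icon,
        "Terminal": "false",
    }
    if comment:
        cp["Desktop Entry"]["Comment"] = comment
    buf = io.StringIO()
    cp.write(buf, space_around_delimiters=False)  # emit Key=Value with no spaces
    return buf.getvalue()
```

For example, saving make_desktop_entry("My Tool", "/opt/mytool/bin/mytool %U", "/opt/mytool/icon.png") as ~/.local/share/applications/mytool.desktop gives a per-user launcher. System-wide launchers live in /usr/share/applications.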

Python browser automation and E2E testing compared.

Choosing the right browser automation stack in Python affects speed, stability, and maintenance. This overview compares Playwright vs Selenium vs Puppeteer vs LambdaTest vs ZenRows vs Gauge - with a focus on Python, while noting where Node.js or other languages fit in.

Elm-style (Go) vs immediate-mode (Rust) TUI frameworks quick view

Two strong options for building terminal user interfaces today are BubbleTea (Go) and Ratatui (Rust). One gives you an opinionated, Elm-style framework; the other a flexible, immediate-mode library.
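
Here is a framework-free Python sketch of the Model/Update/View loop that Elm-style frameworks such as BubbleTea are built around. The state is an immutable value, update returns a new state for each message, and view renders the whole screen from that state. The counter app and its key names are invented for illustration. This does not reproduce BubbleTea's actual Go API.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Model:
    count: int = 0
    quitting: bool = False


def update(model: Model, msg: str) -> Model:
    """Return a new model for a key message; never mutate the old one."""
    if msg in ("+", "up"):
        return replace(model, count=model.count + 1)
    if msg in ("-", "down"):
        return replace(model, count=model.count - 1)
    if msg == "q":
        return replace(model, quitting=True)
    return model  # unknown keys leave the state unchanged


def view(model: Model) -> str:
    """Render the entire screen as a string from the current state."""
    if model.quitting:
        return "Bye!\n"
    return f"Count: {model.count}\n(+/- to change, q to quit)\n"


def run(messages) -> str:
    """Drive the loop with scripted messages instead of real key events."""
    model = Model()
    for msg in messages:
        model = update(model, msg)
        if model.quitting:
            break
    return view(model)
```

In an immediate-mode library like Ratatui, by contrast, your own loop owns the state and redraws the widgets on every frame. The library imposes no update/view split.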

Essential shortcuts and magic commands

Boost your Jupyter Notebook productivity with essential keyboard shortcuts, magic commands, and workflow tips for data science and development work.

Master line ending conversions across platforms

Line ending inconsistencies between Windows and Linux systems cause formatting issues, Git warnings, and script failures. This comprehensive guide covers detection, conversion, and prevention strategies.
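
Detection and conversion fit in a few lines of Python when you work on raw bytes. Reading files in binary mode ("rb") avoids Python's universal-newline translation, which would hide the original endings. This is a minimal sketch, and the function names are chosen here for illustration.

```python
def detect_line_endings(data: bytes) -> str:
    """Classify data as 'CRLF', 'LF', 'CR', 'mixed', or 'none'."""
    crlf = data.count(b"\r\n")
    lf = data.count(b"\n") - crlf  # bare LF (Unix)
    cr = data.count(b"\r") - crlf  # bare CR (classic Mac OS)
    kinds = [name for name, n in (("CRLF", crlf), ("LF", lf), ("CR", cr)) if n]
    if not kinds:
        return "none"
    return kinds[0] if len(kinds) == 1 else "mixed"


def to_lf(data: bytes) -> bytes:
    """Normalize CRLF and bare CR to LF."""
    return data.replace(b"\r\n", b"\n").replace(b"\r", b"\n")


def to_crlf(data: bytes) -> bytes:
    """Normalize any line ending to CRLF."""
    return to_lf(data).replace(b"\n", b"\r\n")
```

On the prevention side, Git's core.autocrlf setting and a .gitattributes rule such as "* text=auto" keep new inconsistencies out of the repository.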

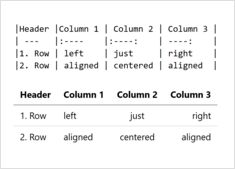

Complete guide to creating tables in Markdown

Tables are one of the most powerful features in Markdown for organizing and presenting structured data. Whether you’re creating technical documentation, README files, or blog posts, understanding how to properly format tables can significantly improve your content’s readability and professionalism.
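
As a small illustration of the pipe-table syntax (a GitHub Flavored Markdown extension, not part of core CommonMark), this sketch renders rows into a table. It supports optional per-column alignment and escapes literal pipes inside cells. markdown_table is a hypothetical helper.

```python
def markdown_table(headers, rows, align=None) -> str:
    """Render a GitHub Flavored Markdown pipe table.

    align: optional list of 'left', 'center', or 'right', one per column.
    """
    marks = {"left": ":---", "center": ":---:", "right": "---:", None: "---"}
    align = align or [None] * len(headers)
    if len(align) != len(headers):
        raise ValueError("align must have one entry per column")

    def cell(value) -> str:
        # A literal pipe would end the cell early, so escape it.
        return str(value).replace("|", "\\|")

    lines = [
        "| " + " | ".join(cell(h) for h in headers) + " |",
        "| " + " | ".join(marks[a] for a in align) + " |",  # delimiter row
    ]
    for row in rows:
        if len(row) != len(headers):
            raise ValueError(f"row has {len(row)} cells, expected {len(headers)}")
        lines.append("| " + " | ".join(cell(v) for v in row) + " |")
    return "\n".join(lines)
```

For example, markdown_table(["Tool", "Port"], [["llama-server", 8080]], align=["left", "right"]) produces a header row, a "| :--- | ---: |" delimiter row, and one data row.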

Cross-distro apps with Flatpak & Flathub

Flatpak is a next-generation technology for building and distributing desktop applications on Linux, offering universal packaging, sandboxing, and seamless cross-distribution compatibility.