RTX 5090 in Australia, March 2026: Pricing and Stock Reality

RTX 5090 in AU is scarce and overpriced

Australia has RTX 5090 stock. Barely. And if you find one, you will pay a premium that feels detached from reality.

Compose-first Ollama server with GPU and persistence.

Ollama works great on bare metal. It gets even more interesting when you treat it like a service: a stable endpoint, pinned versions, persistent storage, and a GPU that is either available or it is not.

LLM speed test on RTX 4080 with 16GB VRAM

Running large language models locally gives you privacy, offline capability, and zero API costs. This benchmark reveals exactly what one can expect from 14 popular LLMs on Ollama on an RTX 4080.

Choose the right terminal for your Linux workflow

One of the most essential tools for Linux users is the terminal emulator.

Real AUD pricing from Aussie retailers now

The NVIDIA DGX Spark (GB10 Grace Blackwell) is now available in Australia at major PC retailers with local stock. If you’ve been following the global DGX Spark pricing and availability, you’ll be interested to know that Australian pricing ranges from $6,249 to $7,999 AUD depending on storage configuration and retailer.

AI-suitable Consumer GPU Prices - RTX 5080 and RTX 5090

Let’s compare prices for top-tier consumer GPUs that are suitable for LLMs in particular and AI in general. Specifically, I’m looking at RTX 5080 and RTX 5090 prices.

Unify text, images, and audio in shared embedding spaces

Cross-modal embeddings represent a breakthrough in artificial intelligence, enabling understanding and reasoning across different data types within a unified representation space.

Deploy enterprise AI on budget hardware with open models

The democratization of AI is here. With open-source LLMs like Llama, Mistral, and Qwen now rivaling proprietary models, teams can build powerful AI infrastructure using consumer hardware - slashing costs while maintaining complete control over data privacy and deployment.

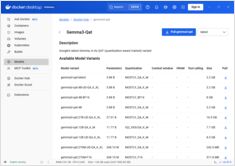

Configure context sizes in Docker Model Runner with workarounds

Configuring context sizes in Docker Model Runner is more complex than it should be.

AI model for augmenting images with text instructions

Black Forest Labs has released FLUX.1-Kontext-dev, an advanced image-to-image AI model that augments existing images using text instructions.

Enable GPU acceleration for Docker Model Runner with NVIDIA CUDA support

Docker Model Runner is Docker’s official tool for running AI models locally, but enabling NVIDIA GPU acceleration in it requires specific configuration.

GPT-OSS 120b benchmarks on three AI platforms

I dug up some interesting performance tests of GPT-OSS 120b running on Ollama across three different platforms: NVIDIA DGX Spark, Mac Studio, and RTX 4080. The GPT-OSS 120b model from the Ollama library weighs in at 65GB, which means it doesn’t fit into the 16GB VRAM of an RTX 4080 (or the newer RTX 5080).

Quick reference for Docker Model Runner commands

Docker Model Runner (DMR) is Docker’s official solution for running AI models locally, introduced in April 2025. This cheatsheet provides a quick reference for all essential commands, configurations, and best practices.

Compare Docker Model Runner and Ollama for local LLM

Running large language models (LLMs) locally has become increasingly popular for privacy, cost control, and offline capabilities. The landscape shifted significantly in April 2025 when Docker introduced Docker Model Runner (DMR), its official solution for AI model deployment.

Availability, real-world retail pricing across six countries, and comparison against Mac Studio.

NVIDIA DGX Spark is real, on sale Oct 15, 2025, and targeted at CUDA developers needing local LLM work with an integrated NVIDIA AI stack. US MSRP $3,999; UK/DE/JP retail is higher due to VAT and channel. AUD/KRW public sticker prices are not yet widely posted.

AI-suitable Consumer GPU Prices - RTX 5080 and RTX 5090

Let’s compare prices for top-tier consumer GPUs that are suitable for LLMs in particular and AI in general. Specifically, I’m looking at RTX 5080 and RTX 5090 prices. They have dropped slightly.