LLM ASICs and specialized inference chips (why they matter)

ASICs and custom silicon push LLM inference speed and efficiency

The future of AI is not only about smarter models. It is also about silicon that matches how those models are actually served. Specialized hardware for LLM inference is following a path reminiscent of Bitcoin mining’s move from GPUs to purpose-built ASICs, only with harder constraints because models and precision recipes keep evolving.

For more on throughput, latency, VRAM, and benchmarks across runtimes and hardware, see LLM Performance: Benchmarks, Bottlenecks & Optimization.

Electrical Imagination - Flux text to image LLM.

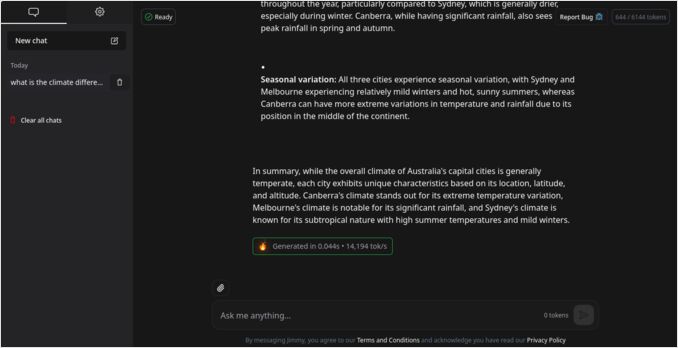


Why LLMs benefit from inference-specific hardware

Large language models have transformed AI, but every fluent reply depends on huge, predictable streams of matrix math and memory traffic. As inference spend grows — often overtaking training over a model’s lifetime — chips optimized for serving, not for every possible workload, become economically compelling.

The analogy to Bitcoin mining is imperfect yet instructive. Both are repetitive, well-bounded tasks where stripping unused generality from the die can buy large gains in throughput and joules per useful operation.

What Bitcoin mining history suggests about inference ASICs

Bitcoin mining moved through four hardware generations: CPUs, GPUs, FPGAs, and finally ASICs. Each transition improved performance and energy efficiency by orders of magnitude. AI accelerators are tracing a comparable arc:

| Era | Hardware | Key Benefit | Limitation |
|---|---|---|---|
| 2015–2020 | GPUs (CUDA, ROCm) | Flexibility | Power hungry, memory-bound |
| 2021–2023 | TPUs, NPUs | Coarse-grain specialization | Still training-oriented |
| 2024–2025 | Transformer ASICs | Tuned for low-bit inference | Limited generality |

As with mining, each step gives up some generality in exchange for performance and energy efficiency.

However, unlike Bitcoin ASICs (which only compute SHA-256), inference ASICs need some flexibility. Models evolve, architectures change, and precision schemes improve. The trick is to specialize just enough — hardwiring the core patterns while maintaining adaptability at the edges.

How LLM inference differs from training (and what chips exploit)

Inference workloads expose patterns that specialized hardware can target:

- Low precision dominates — 8-bit, 4-bit, even ternary or binary arithmetic work well for inference

- Memory is the bottleneck — Moving weights and KV caches consumes far more power than computation

- Latency matters more than throughput — Users expect tokens in under 200ms

- Massive request parallelism — Thousands of concurrent inference requests per chip

- Predictable patterns — Transformer layers are highly structured and can be hardwired

- Sparsity opportunities — Models increasingly use pruning and MoE (Mixture-of-Experts) techniques

A purpose-built inference chip can hard-wire these assumptions to achieve 10–50× better performance per watt than general-purpose GPUs.
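
A back-of-envelope sketch shows why memory traffic, rather than arithmetic, tends to cap decode speed. It assumes every generated token streams the full set of weights from memory once, and it ignores KV-cache traffic, batching, and compute time. The 3.35 TB/s bandwidth figure and the function names are illustrative assumptions, not values taken from any vendor discussed here.

```python
# Rough ceiling on single-stream decode speed when weight traffic dominates.
# Assumption: each generated token reads all weights from memory exactly once.

def weight_bytes(params: float, bits_per_weight: float) -> float:
    """Total bytes needed to store the model weights."""
    return params * bits_per_weight / 8


def max_decode_tokens_per_s(params: float, bits_per_weight: float,
                            bandwidth_bytes_per_s: float) -> float:
    """Bandwidth-bound ceiling: one full pass over the weights per token."""
    return bandwidth_bytes_per_s / weight_bytes(params, bits_per_weight)


PARAMS_70B = 70e9
HBM_BANDWIDTH = 3.35e12  # bytes/s; illustrative HBM3-class figure (assumption)

for bits in (16, 8, 4):
    size_gb = weight_bytes(PARAMS_70B, bits) / 1e9
    ceiling = max_decode_tokens_per_s(PARAMS_70B, bits, HBM_BANDWIDTH)
    print(f"{bits:>2}-bit weights: {size_gb:.0f} GB, "
          f"about {ceiling:.0f} tokens/s ceiling per stream")
```

Under these assumptions, halving the bits per weight doubles the ceiling, which is one reason low-bit formats and large on-chip memories are attractive. Batching amortizes the same weight pass across many users, so aggregate throughput can sit far above this single-stream bound.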

Who is building LLM-optimized inference silicon

The inference ASIC market spans incumbents, wafer-scale designs, and startups betting on transformer-shaped silicon:

| Company | Chip / Platform | Specialty |
|---|---|---|
| Groq | LPU (Language Processing Unit) | Deterministic throughput for LLMs |
| Etched AI | Sohu ASIC | Hard-wired Transformer engine |
| Tenstorrent | Grayskull / Blackhole | General ML with high-bandwidth mesh |
| Taalas | HC1 (Llama 3.1 8B product) / HC2 roadmap | Model-specific “hardcore” silicon; merges storage and compute |
| OpenAI × Broadcom | Custom Inference Chip | Rumored 2026 rollout |
| Intel | Crescent Island | Inference-only Xe3P GPU with 160GB HBM |
| Cerebras | Wafer-Scale Engine (WSE-3) | Massive on-die memory bandwidth |

Much of this is already in production data centers, not slideware. Smaller teams such as d-Matrix, Rain AI, Mythic, and Tenet are also pursuing architectures tuned to low-bit inference and structured sparsity.

Taalas HC1, Chat Jimmy, and ultra-fast small-model serving

Taalas is a recent example of the “specialize almost everything” school. The company argues that the memory–compute boundary (off-chip DRAM versus on-chip SRAM) dominates cost, power, and engineering complexity for inference, and that per-model silicon — what they call Hardcore Models — can collapse that boundary when a deployment is willing to fix the weights and graph.

Their first shipping product, HC1, hard-wires a Llama 3.1 8B variant. That choice is pragmatic: the model is small enough to bring up quickly, openly documented, and still useful for many automation, classification, and drafting tasks where raw reasoning depth matters less than latency and cost. Taalas reports on the order of 16k–17k decoded tokens per second per user for this configuration (vendor methodology and comparisons appear in their write-up), alongside claims of large gains in capital and power versus conventional GPU stacks for the same model class. First-generation parts use aggressive mixed low-bit storage; the firm describes moving toward standard 4-bit floating formats on HC2 to recover headroom on quality.
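
To put the reported decode rate in perspective, it can be converted into per-token latency. This is a minimal sketch that takes the vendor's 16k to 17k tokens per second at face value and assumes a steady decode rate; it ignores prefill, queueing, and network time, and the function names are illustrative.

```python
def per_token_latency_us(tokens_per_s: float) -> float:
    """Average gap between decoded tokens, in microseconds."""
    return 1e6 / tokens_per_s


def decode_time_ms(n_tokens: int, tokens_per_s: float) -> float:
    """Time to decode n_tokens at a steady rate, in milliseconds."""
    return 1000 * n_tokens / tokens_per_s


for rate in (16_000, 17_000):
    print(f"{rate} tokens/s: {per_token_latency_us(rate):.1f} us per token, "
          f"500-token reply in {decode_time_ms(500, rate):.1f} ms")
```

At 16,000 tokens per second, each token takes 62.5 microseconds and a 500-token reply decodes in about 31 ms, so the decode phase stops being the part of the round trip a user notices.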

For developers who want to feel what that throughput class implies in practice, Taalas runs a free chatbot demo, Chat Jimmy, and offers API access through an application form on their site. It is explicitly a proof of concept — not a frontier assistant — but it illustrates a real audience that may prefer a modest model at “human cognition speed” over a larger model that feels sluggish or expensive.

Architecture of a transformer inference ASIC

What does a transformer-optimized chip actually look like under the hood?

    +--------------------------------------+
    | Host Interface                       |
    | (PCIe / CXL / NVLink / Ethernet)     |
    +--------------------------------------+
    | On-chip Interconnect (mesh/ring)     |
    +--------------------------------------+
    | Compute Tiles / Cores                |
    |   - Dense matrix multiply units      |
    |   - Low-precision (int8/int4) ALUs   |
    |   - Dequant / Activation units       |
    +--------------------------------------+
    | On-chip SRAM & KV cache buffers      |
    |   - Hot weights, fused caches        |
    +--------------------------------------+
    | Quantization / Dequant Pipelines     |
    +--------------------------------------+
    | Scheduler / Controller               |
    |   - Static graph execution engine    |
    +--------------------------------------+
    | Off-chip DRAM / HBM Interface        |
    +--------------------------------------+

Key architectural features include:

- Compute cores — Dense matrix-multiply units optimized for int8, int4, and ternary operations

- On-chip SRAM — Large buffers hold hot weights and KV caches, minimizing expensive DRAM accesses

- Streaming interconnects — Mesh topology enables efficient scaling across multiple chips

- Quantization engines — Real-time quantization/dequantization between layers

- Compiler stack — Translates PyTorch/ONNX graphs directly into chip-specific micro-ops

- Hardwired attention kernels — Eliminates control flow overhead for softmax and other operations

The design philosophy mirrors Bitcoin ASICs: every transistor serves the specific workload. No wasted silicon on features inference doesn’t need.
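
To see why on-chip buffers can hold only the hot part of a KV cache, it helps to size the cache. The sketch below uses the usual formula (keys plus values, per layer, per KV head, per token). The shape values are assumed to match a Llama-2-70B-class model with grouped-query attention (80 layers, 8 KV heads, head dimension 128); substitute the numbers from your own model's config. The 100 MB SRAM budget and the function names are likewise illustrative assumptions.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int, batch: int = 1) -> int:
    """Bytes of KV cache: one key and one value vector per layer, head, token."""
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_value * batch


def tokens_that_fit(sram_bytes: int, layers: int, kv_heads: int,
                    head_dim: int, bytes_per_value: int) -> int:
    """How many tokens of a single sequence fit in a given SRAM budget."""
    per_token = kv_cache_bytes(layers, kv_heads, head_dim, 1, bytes_per_value)
    return sram_bytes // per_token


# Assumed shapes for a Llama-2-70B-class model (check your model's config).
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128
SRAM_BUDGET = 100 * 10**6  # 100 MB, an illustrative on-chip budget

for label, nbytes in (("fp16", 2), ("int8", 1)):
    per_token = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, 1, nbytes)
    full_ctx = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, 4096, nbytes)
    fit = tokens_that_fit(SRAM_BUDGET, LAYERS, KV_HEADS, HEAD_DIM, nbytes)
    print(f"{label}: {per_token / 1024:.0f} KiB/token, "
          f"{full_ctx / 2**30:.2f} GiB at 4096 tokens, "
          f"{fit} tokens fit in 100 MB")
```

With these assumed shapes, a single token costs 320 KiB at 16-bit precision, a 4,096-token context needs 1.25 GiB, and a 100 MB buffer holds only about 305 tokens of one sequence. That gap is why designs lean on a mix of SRAM for hot data, lower-precision caches, and fast off-chip memory.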

GPU versus ASIC benchmarks for LLM inference

Representative public figures show how specialized inference hardware can pull away from general-purpose GPU stacks on the same model families (always verify methodology and batching assumptions for your own workloads):

| Model | Hardware | Throughput (tokens/s) | Time to First Token | Performance Multiplier |
|---|---|---|---|---|
| Llama-2-70B | NVIDIA H100 (8x DGX) | ~80–100 | ~1.7s | Baseline (1×) |
| Llama-2-70B | Groq LPU | 241–300 | 0.22s | 3–18× faster |
| Llama-3.3-70B | Groq LPU | ~276 | ~0.2s | Consistent 3× |
| Gemma-7B | Groq LPU | 814 | <0.1s | 5–15× faster |
| Llama-3.1-8B | Taalas HC1 (vendor) | ~16k–17k decode t/s/user | n/a | Separate axis (fixed 8B graph, not 70B) |

Sources: Groq.com, ArtificialAnalysis.ai, NVIDIA Developer Blog; Taalas HC1 figures from the company’s product post.

The Groq-focused rows show large gains in throughput and time-to-first-token versus a high-end GPU baseline on large models. The Taalas row is not another multiplier against those 70B lines; it illustrates how far per-user decode can be pushed when the model and graph are fixed in silicon, at the cost of flexibility.
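
As a sanity check on the table, the speedups implied by each row's own figures can be recomputed directly. This sketch uses only the Llama-2-70B numbers quoted above; the helper name `ratio_range` is hypothetical.

```python
def ratio_range(new: tuple, old: tuple) -> tuple:
    """Smallest and largest ratio obtainable from two (low, high) ranges."""
    return new[0] / old[1], new[1] / old[0]


# Llama-2-70B figures from the table above.
gpu_tps = (80, 100)     # tokens/s, H100 baseline
lpu_tps = (241, 300)    # tokens/s, Groq LPU
gpu_ttft_s = 1.7        # time to first token, seconds
lpu_ttft_s = 0.22

low, high = ratio_range(lpu_tps, gpu_tps)
ttft_ratio = gpu_ttft_s / lpu_ttft_s

print(f"Throughput speedup: {low:.2f}x to {high:.2f}x")
print(f"Time-to-first-token speedup: {ttft_ratio:.1f}x")
```

From these figures the throughput ratio lands between roughly 2.4× and 3.75×, and the time-to-first-token ratio near 7.7×. The upper end of the quoted "3–18×" multiplier therefore presumably reflects other configurations or metrics reported by the sources, which is one more reason to check methodology before relying on a headline number.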

Trade-offs when you specialize inference silicon

Specialization buys performance, but it reintroduces product and engineering risk:

- Flexibility vs. Efficiency. A fully fixed ASIC screams through today’s transformer models but might struggle with tomorrow’s architectures. What happens when attention mechanisms evolve or new model families emerge?

- Quantization and Accuracy. Lower precision saves massive amounts of power, but managing accuracy degradation requires sophisticated quantization schemes. Not all models quantize gracefully to 4-bit or lower.

- Software Ecosystem. Hardware without robust compilers, kernels, and frameworks is useless. NVIDIA still dominates largely because of CUDA’s mature ecosystem. New chip makers must invest heavily in software.

- Cost and Risk. Taping out a chip costs tens of millions of dollars and takes 12–24 months. For startups, this is a massive bet on architectural assumptions that might not hold.

Still, at hyperscale, even 2× efficiency gains translate to billions in savings. For cloud providers running millions of inference requests per second, custom silicon is increasingly non-negotiable.
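
The quantization trade-off listed above can be made concrete with a small round-trip experiment. This is a minimal sketch of symmetric per-tensor quantization on synthetic Gaussian "weights"; it is a swapped-in textbook scheme, not the method used by any chip mentioned here, and real deployments typically use per-channel or per-group scales. All names are illustrative.

```python
import random


def quantize(values, bits):
    """Symmetric per-tensor quantization to signed integers of width `bits`."""
    qmax = 2 ** (bits - 1) - 1                 # 127 for int8, 7 for int4
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Map integer codes back to approximate real values."""
    return [x * scale for x in q]


def mean_abs_error(a, b):
    """Average absolute difference between two equal-length sequences."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]  # synthetic data

for bits in (8, 4):
    q, scale = quantize(weights, bits)
    err = mean_abs_error(weights, dequantize(q, scale))
    print(f"int{bits}: scale={scale:.6f}, mean abs error={err:.6f}")
```

The 4-bit error comes out much larger than the 8-bit error because the same value range is covered by 15 levels instead of 255. Whether a model tolerates that loss depends on its weight distribution, which is why finer-grained scales and calibration matter at low bit widths.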

A wish-list spec for an LLM inference chip

| Feature | Ideal Specification |
|---|---|
| Process | 3–5nm node |
| On-chip SRAM | 100MB+ tightly coupled |
| Precision | int8 / int4 / ternary native support |
| Throughput | 500+ tokens/sec (70B model) |
| Latency | <100ms time to first token |
| Interconnect | Low-latency mesh or optical links |
| Compiler | PyTorch/ONNX → microcode toolchain |
| Energy | <0.3 joules per token |
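
The throughput and energy targets in the table imply a power budget, which is easy to check. This is a minimal sketch that assumes the energy figure covers the accelerator only (no host, networking, or cooling); the function names are illustrative.

```python
JOULES_PER_KWH = 3.6e6


def power_watts(joules_per_token: float, tokens_per_s: float) -> float:
    """Sustained power implied by an energy-per-token and a token rate."""
    return joules_per_token * tokens_per_s


def kwh_per_million_tokens(joules_per_token: float) -> float:
    """Energy for one million tokens, expressed in kilowatt-hours."""
    return joules_per_token * 1e6 / JOULES_PER_KWH


# Wish-list targets from the table above.
ENERGY_J_PER_TOKEN = 0.3
THROUGHPUT_TPS = 500

print(f"Implied power: {power_watts(ENERGY_J_PER_TOKEN, THROUGHPUT_TPS):.0f} W")
print(f"Energy per 1M tokens: "
      f"{kwh_per_million_tokens(ENERGY_J_PER_TOKEN):.3f} kWh")
```

At the stated limits this works out to about 150 W of sustained draw and roughly 0.083 kWh per million tokens, so the two targets are mutually consistent with a single accelerator-class power envelope.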

Looking ahead (2026–2030)

Expect the inference hardware landscape to stratify into three coarse tiers:

- Training Chips. High-end GPUs like NVIDIA B200 and AMD Instinct MI400 will continue dominating training with their FP16/FP8 flexibility and massive memory bandwidth.

- Inference ASICs. Hardwired, low-precision transformer accelerators will handle production serving at hyperscale, optimized for cost and efficiency.

- Edge NPUs. Small, ultra-efficient chips will bring quantized LLMs to smartphones, vehicles, IoT devices, and robots, enabling on-device intelligence without cloud dependency.

Beyond hardware alone, we’ll see:

- Hybrid clusters — GPUs for flexible training, ASICs (or wafer-scale inference engines) for efficient serving

- Inference-as-a-Service — Hyperscalers mixing first-party accelerators (AWS Inferentia, Google TPU, and others) with GPUs

- Hardware–software co-design — Models shaped for block sparsity, MoE routing, and quantization-friendly layers

- Per-model or per-family silicon — Firms like Taalas betting that some deployments will trade architectural flexibility for extreme cost and latency on a known graph

- Open inference APIs — Pressure to keep serving interfaces portable even when the silicon is not

Final thoughts

The “ASIC-ization” of AI inference is already underway. Just as Bitcoin mining evolved from CPUs to specialized silicon, AI deployment is following the same path.

The next revolution in AI won’t be about bigger models — it’ll be about better chips. Hardware optimized for the specific patterns of transformer inference will determine who can deploy AI economically at scale.

Just as Bitcoin miners optimized away every wasted watt, inference hardware will squeeze every last FLOP-per-joule. When that happens, the real breakthrough won’t be in the algorithms — it’ll be in the silicon running them.

The future of AI is being etched in silicon, one transistor at a time.

For more benchmarks, hardware choices, and performance tuning, check our LLM Performance: Benchmarks, Bottlenecks & Optimization hub.

Useful Links

- Groq Official Benchmarks

- Taalas — The path to ubiquitous AI (HC1, roadmap, philosophy)

- Chat Jimmy — Taalas Llama 3.1 8B demo

- Taalas API access request form

- Artificial Analysis - LLM Performance Leaderboard

- NVIDIA H100 Technical Brief

- Etched AI - Transformer ASIC Announcement

- Cerebras Wafer-Scale Engine

- NVidia RTX 5080 and RTX 5090 prices in Australia - October 2025

- LLM Performance and PCIe Lanes: Key Considerations

- Large Language Models Speed Test

- Comparing NVidia GPU suitability for AI

- Is the Quadro RTX 5880 Ada 48GB Any Good?