OpenClaw Rise and Fall — Timeline and Real Reasons Behind the Collapse

OpenClaw rose fast. Then vanished faster.

OpenClaw did not fail as a product. It lost its fuel.

What looks like a dramatic boom and collapse is actually something more mechanical and more interesting. OpenClaw was a thin layer on top of a temporary economic advantage in the AI ecosystem. Once that advantage disappeared, so did the attention.

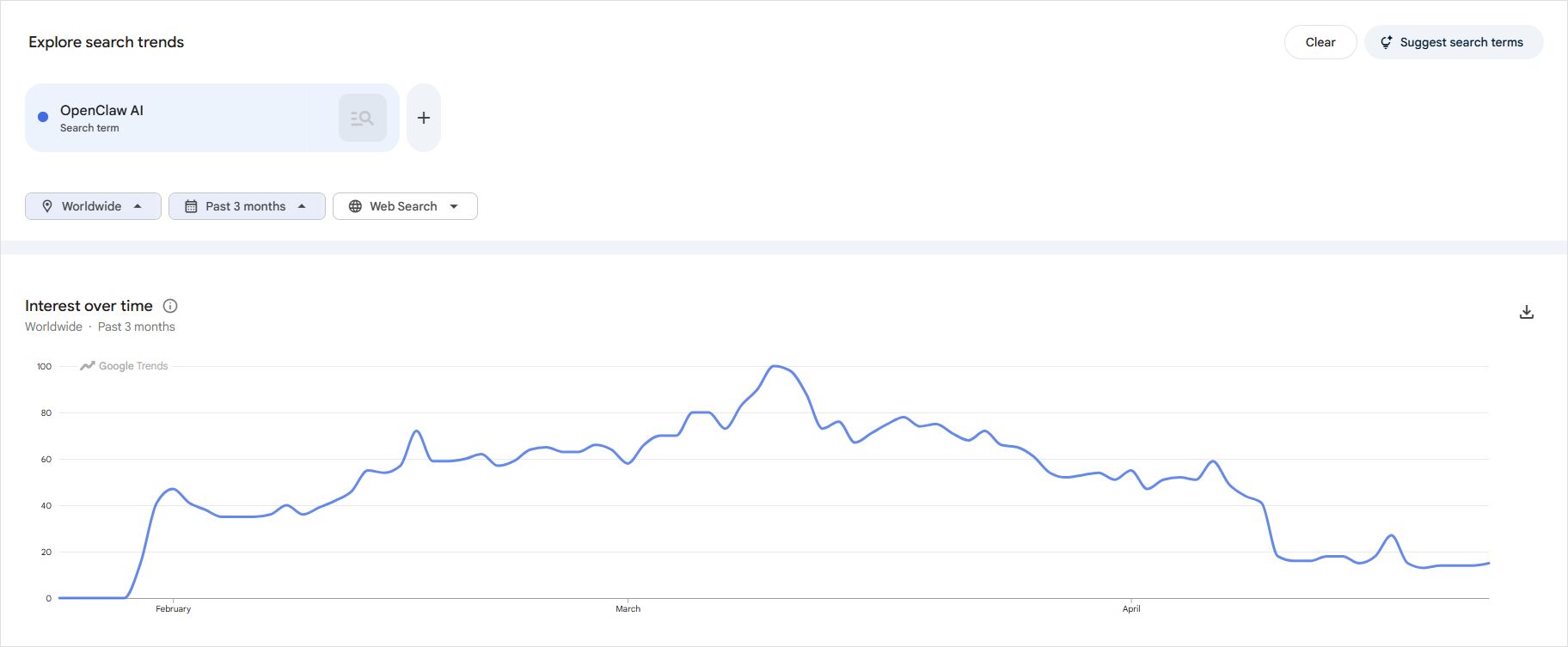

The graph above shows Google Trends search interest for OpenClaw AI.

This article breaks down the exact timeline, the real drivers behind the spike, and why the drop was inevitable.

The illusion of product-driven growth

Most people assume OpenClaw grew because it was a great AI agent — and that is only partially true.

OpenClaw was genuinely useful. It supported more than 50 integrations, worked across Claude, GPT-4o, Gemini, and DeepSeek, and attracted enterprise adoption — Tencent built a platform directly on top of it. But capability alone did not set it apart from comparable alternatives:

- Cline

- LangChain-based setups

- Other agent wrappers

The real driver was access rather than capability — a distinction that explains the entire arc of OpenClaw’s rise and collapse.

OpenClaw made powerful models cheap to use at scale.

Phase 1. Quiet emergence (November 2025)

The story begins in November 2025, when Peter Steinberger built the first prototype in roughly one hour. He was annoyed that the tool did not exist yet, so he built it, calling it Clawdbot — a nod to Anthropic’s Claude, complete with a lobster mascot.

The first version was practical rather than flashy: an AI agent that could manage calendars, check email, book appointments, and automate computer tasks on the user’s behalf. Steinberger shared it in developer communities and early adopters recognized something promising, though growth at this stage remained slow and organic with no visibility outside technical circles.

Phase 2. The viral ignition (January–February 2026)

The spike began when several forces aligned in quick succession.

1. Naming drama and forced rebrands

In late January 2026, Anthropic sent Steinberger a trademark notice over “Clawdbot,” citing phonetic similarity to “Claude.” By his account, Anthropic handled it professionally — but the notice forced a rename. The project became Moltbot for three days, then OpenClaw, and the forced rebranding generated exactly the kind of attention that marketing budgets cannot buy.

2. The agent hype wave

The market was already primed for an agent breakthrough:

- autonomous agents were trending across social media and the tech press

- “AI that can act” had become the dominant narrative

- developers were actively searching for tools that could automate complex workflows

OpenClaw arrived at exactly the right moment, when demand for this kind of tool was at its highest and the story of autonomous AI agents was capturing mainstream attention.

3. The cheap compute loophole

The most decisive factor was a compute pricing loophole that no amount of good engineering could have manufactured deliberately.

Users discovered that OpenClaw could connect to Claude by grabbing the OAuth token from a Claude Pro or Max subscription and spoofing the authentication headers of Anthropic’s own Claude Code client. Instead of paying per token through the API, they effectively got:

near unlimited agent execution for a fixed monthly cost

The numbers made this explosive. A Claude Max subscription cost $200 per month, while running equivalent workloads through the API would cost far more — industry analysts estimated a price gap of more than five times, meaning Anthropic was quietly subsidising each heavy OpenClaw user by hundreds of dollars a month.
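
The subsidy arithmetic above can be checked with a few lines of Python. This is a minimal sketch that uses only the two figures quoted in this article: the $200 monthly plan and the analysts' price gap of more than five times. The function name `implied_subsidy` is hypothetical, and the 5x multiplier is treated as a lower bound, not a measured value.

```python
# Figures taken from the article: a $200/month Claude Max plan and an
# analyst-estimated price gap of "more than five times" versus API billing.
SUBSCRIPTION_USD = 200.0
PRICE_GAP = 5.0  # assumption: used as a lower bound, not a measured value


def implied_subsidy(subscription_usd: float, price_gap: float) -> float:
    """Return the part of the API-equivalent monthly cost not covered by the flat fee."""
    api_equivalent = subscription_usd * price_gap
    return api_equivalent - subscription_usd


if __name__ == "__main__":
    subsidy = implied_subsidy(SUBSCRIPTION_USD, PRICE_GAP)
    print(f"Implied subsidy per heavy user: at least ${subsidy:,.0f}/month")
```

At a 5x gap the same workload would cost about $1,000 through the API, so the flat fee leaves roughly $800 uncovered, which is consistent with the "hundreds of dollars a month" estimate.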

This changed behavior instantly:

- developers ran heavy experiments they would never have attempted at API prices

- viral demos flooded social media

- large-scale automation became accessible to solo developers

Nothing in the software changed. The economics did, and that shift alone was enough to ignite a viral adoption curve. The OpenClaw repository reached 100,000 GitHub stars in under 48 hours, and by March 2, 2026 it had accumulated 247,000 stars and 47,700 forks, a pace widely described as making it the fastest-growing GitHub project in history.

Phase 3. Peak usage and inflated expectations

At peak interest, developers pushed agents to extremes, social media amplified the results, and expectations exploded around what personal AI automation could achieve. An estimated 135,000 OpenClaw instances were running simultaneously when Anthropic announced the subscription cutoff in April, and one founder publicly described how she had deployed nine separate AI agents to manage her administrative work and personal household logistics.

Why do AI tools suddenly become popular and then fade

Because the initial spike is driven by novelty and perceived leverage. Once users test the limits, reality sets in — the tool proves harder to use reliably, and the economic conditions that made it attractive often turn out to be temporary. In OpenClaw’s case, the perceived leverage was real but built on borrowed economics that Anthropic had not priced for agentic workloads.

The creator leaves for OpenAI (February 2026)

Before the collapse arrived, OpenClaw lost its original architect.

On February 14–15, 2026, Steinberger announced he was leaving the project to join OpenAI. Sam Altman posted that Steinberger would “drive the next generation of personal agents” at the company, and Steinberger wrote that “teaming up with OpenAI is the fastest way to bring this to everyone.” OpenClaw was transferred to an independent open-source foundation with OpenAI’s continued support.

The timing was striking. Anthropic had declined to hire or partner with Steinberger, despite the fact that his tool had become arguably their best free marketing in years — a project built explicitly to showcase how good Claude was. Instead, he went directly to their biggest competitor, taking with him both the project’s momentum and its community relationships.

Phase 4. The correction begins

Two things started happening at the same time.

1. Reality of agent limitations

Users who had deployed OpenClaw at scale began encountering its real constraints:

- agents are brittle and fail unpredictably on multi-step tasks

- reliability is inconsistent across different workflows and environments

- setup and maintenance are non-trivial for most users outside technical circles

These limitations alone would have caused a gradual decline, but OpenClaw did not taper off gradually — it dropped sharply, because a second and more decisive force hit at exactly the same time.

2. The economic layer breaks

Anthropic had already run this playbook once. In January 2026, just weeks before OpenClaw peaked, they blocked OpenCode — another popular third-party coding client — from using Claude subscription tokens in what was framed as a terms of service violation, not a capacity issue. OpenClaw users had every reason to expect the same treatment, and that moment arrived in April.

Anthropic then introduced restrictions that closed the loophole entirely:

- third-party tools were blocked from using subscription OAuth tokens

- usage shifted to pay-as-you-go extra billing or full API keys

This removed the key advantage:

cheap large-scale execution

Now users faced a very different cost structure:

| Metric | Before cutoff | After cutoff |
|---|---|---|
| Monthly plan cost | $20–$200 (flat) | $20–$200 + usage |
| Cost per task | Effectively $0 | $0.50–$2.00 |
| API rate (Sonnet 4.6 input) | Covered by subscription | $3 per million tokens |
| API rate (Sonnet 4.6 output) | Covered by subscription | $15 per million tokens |
| Increase for heavy users | n/a | 10× to 50× |
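
To see how the per-token rates in the table turn into a per-task cost, here is a minimal Python sketch. The two rates come from the table. The token counts for the example task (150,000 input and 20,000 output) are purely illustrative assumptions, not measurements of any real OpenClaw workload, and `task_cost` is a hypothetical helper name.

```python
# Rates from the table above (Sonnet 4.6, USD per million tokens).
INPUT_USD_PER_MTOK = 3.0
OUTPUT_USD_PER_MTOK = 15.0


def task_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the metered cost in USD of one task at the table's API rates."""
    total_micro_usd = (
        input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    )
    return total_micro_usd / 1_000_000


if __name__ == "__main__":
    # Assumption: a hypothetical agent task that reads 150k tokens of context
    # and writes 20k tokens of output.
    cost = task_cost(input_tokens=150_000, output_tokens=20_000)
    print(f"Estimated cost for one task: ${cost:.2f}")
```

With those assumed token counts the task costs $0.75, inside the $0.50 to $2.00 range in the table. Larger contexts or longer outputs push the figure toward the upper end.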

What caused the sudden drop in interest in AI agent tools

The answer is straightforward: not a lack of innovation, but the loss of affordable compute. Once the pricing floor disappeared, the incentive to experiment and share disappeared with it, and search interest followed almost immediately.

April 4, 2026 — The hard cutoff

On April 4, 2026, at 12 PM Pacific Time, subscription access ended for all third-party tools.

Boris Cherny, Head of Claude Code at Anthropic, posted on X that Claude Pro and Max subscriptions would no longer cover usage from third-party tools, effective immediately. An Anthropic spokesperson confirmed that using subscriptions with third-party tools was always against the terms of service, and that those tools were placing “an outsized strain on our systems.” Additional context made the timing feel urgent: on April 1, the full source code of Claude Code — 512,000 lines of TypeScript — had leaked through an npm package, exposing exactly how Anthropic’s first-party tools authenticated with the backend and making it more pressing to lock down third-party tools that were spoofing those same patterns.

Anthropic offered a one-time credit equal to one month’s subscription fee and a 30% discount on pre-purchased usage bundles to ease the transition. For light users, the credit covered the adjustment period, but for power users running multiple instances the new numbers simply did not work. The effect on activity was immediate:

- experimentation stopped

- viral sharing disappeared

- search interest collapsed

This matches the sharp drop in Google Trends almost perfectly. The full policy mechanics and migration options after the cutoff are covered in Claude, OpenClaw, and the End of Flat Pricing for Agents.

OpenAI moves in the opposite direction

On the same day as the Anthropic ban, OpenAI publicly confirmed that ChatGPT Plus, Pro, and Team subscribers were entirely free to use their subscriptions to power OpenClaw through OAuth — including with models like GPT-5.3 Codex for complex coding tasks.

This was not accidental timing. By hiring Steinberger and explicitly opening its subscription gates, OpenAI positioned itself as the developer-friendly alternative at the exact moment Anthropic cut off its most active community, securing the loyalty of the developers who were building the next generation of AI tools.

Phase 5. Where OpenClaw users actually went

Users did not disappear after the ban — they redistributed across a spectrum of alternatives depending on their technical depth and budget.

Direct usage of chat assistants

Many users moved back to direct chat interfaces, trading agent automation for the simplicity and reliability they had given up:

- ChatGPT

- Claude UI

- Gemini

Are AI agents replacing traditional chat assistants

No — for most users, agents add complexity without enough reliability gains. The chat interface remains the default for daily use because it is faster to start, easier to debug when something goes wrong, and requires no infrastructure setup. Agents serve a committed minority of power users, not the general population. The AI developer tools ecosystem has evolved to fill this gap with tools that sit between raw agents and simple chat, giving developers structured assistance without full agentic overhead.

Cheaper model ecosystems

Power users with the technical ability to self-host migrated toward lower-cost alternatives:

- Qwen

- DeepSeek

- other low-cost models accessible through Ollama for fully local setups

Which models are popular for low-cost AI experimentation

Models that offer lower pricing, fewer usage restrictions, and flexible deployment including local self-hosting absorbed the bulk of displaced OpenClaw power users. These ecosystems grew quietly rather than generating public hype, which is why the migration was largely invisible in trend data even as it represented a significant redistribution of compute demand.

Alternative agent frameworks

Developers who still needed agent capabilities switched to leaner approaches:

- custom scripts tailored to specific workflows

- lightweight frameworks with fewer dependencies

- self-hosted solutions combining local models with minimal tooling

The key difference from OpenClaw is that these users optimized for cost and control rather than convenience, and built for sustainability rather than maximum automation at minimum price. This is the pattern common across the self-hosted AI systems ecosystem — provider independence treated as a design requirement, not an afterthought.

The overlooked factor — why cost is the real product

The most important insight from OpenClaw’s trajectory is that cost functions as the real product in AI adoption.

Why is cost important in AI adoption

Because usage scales non-linearly with compute costs. When compute is cheap, experimentation explodes, innovation accelerates, and attention grows because viral sharing becomes economically rational. When compute becomes expensive, usage contracts to serious workflows only, casual users leave, and hype disappears almost overnight — which is precisely why token optimization and cost reduction strategies become critical skills once compute stops being subsidized.
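
The contraction described above can be made concrete with a small Python sketch. It asks how many tasks a fixed monthly budget buys once each task is metered, using the $0.50 to $2.00 per-task range and the $200 plan price quoted earlier in this article. The helper name `tasks_per_budget` is hypothetical, and treating the old flat fee as the new budget is an illustrative assumption.

```python
def tasks_per_budget(budget_usd: float, cost_per_task_usd: float) -> int:
    """Return how many whole tasks a monthly budget covers at a metered price."""
    if cost_per_task_usd <= 0:
        raise ValueError("cost_per_task_usd must be positive")
    return int(budget_usd // cost_per_task_usd)


if __name__ == "__main__":
    BUDGET_USD = 200.0  # assumption: the user keeps spending the old flat fee
    for cost in (0.50, 2.00):  # per-task range quoted in the article
        tasks = tasks_per_budget(BUDGET_USD, cost)
        print(f"${cost:.2f} per task -> {tasks} tasks per month")
```

Under the flat plan the marginal cost of one more task was effectively zero. Under metered pricing the same $200 covers between 100 and 400 tasks a month, which explains why casual experimentation was the first thing to go.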

OpenClaw demonstrated this rule in an unusually clear form: between February and April 2026, the software did not change, but the economics of running it did — and that single shift was enough to collapse the community in a matter of days.

OpenClaw was never the core story

OpenClaw functioned as a surface layer on top of more fundamental forces.

The real story involved three factors operating simultaneously:

- access to Claude models at subscription prices rather than API rates

- a pricing mismatch of more than five to one between what users paid and what usage actually cost Anthropic

- a policy correction that had to happen eventually given the scale of that mismatch

Once those underlying conditions changed, any tool that depended on them would show the same pattern — which is exactly why similar tools spiked and declined in lockstep, regardless of their individual quality or feature sets. Anthropic’s decision also revealed something strategic: by blocking third-party clients while protecting Claude Code, the company chose to concentrate developer engagement inside its own first-party tooling at a moment when independent communities were iterating faster than any centralized lab.

The pattern repeats across AI

OpenClaw’s trajectory is not unique — the same cycle has played out repeatedly across the AI ecosystem.

The same pattern appears in AutoGPT, BabyAGI, and other early agent frameworks that attracted massive attention and then faded as compute costs, reliability limits, or platform restrictions were enforced. The cycle is consistent:

- New capability appears

- Cheap or free usage emerges

- Viral experimentation begins

- Costs or limits are enforced

- Attention collapses

Each cycle leaves behind a smaller, more committed user base and a clearer understanding of what actually works at scale — which is how progress compounds even through the boom-and-bust pattern.

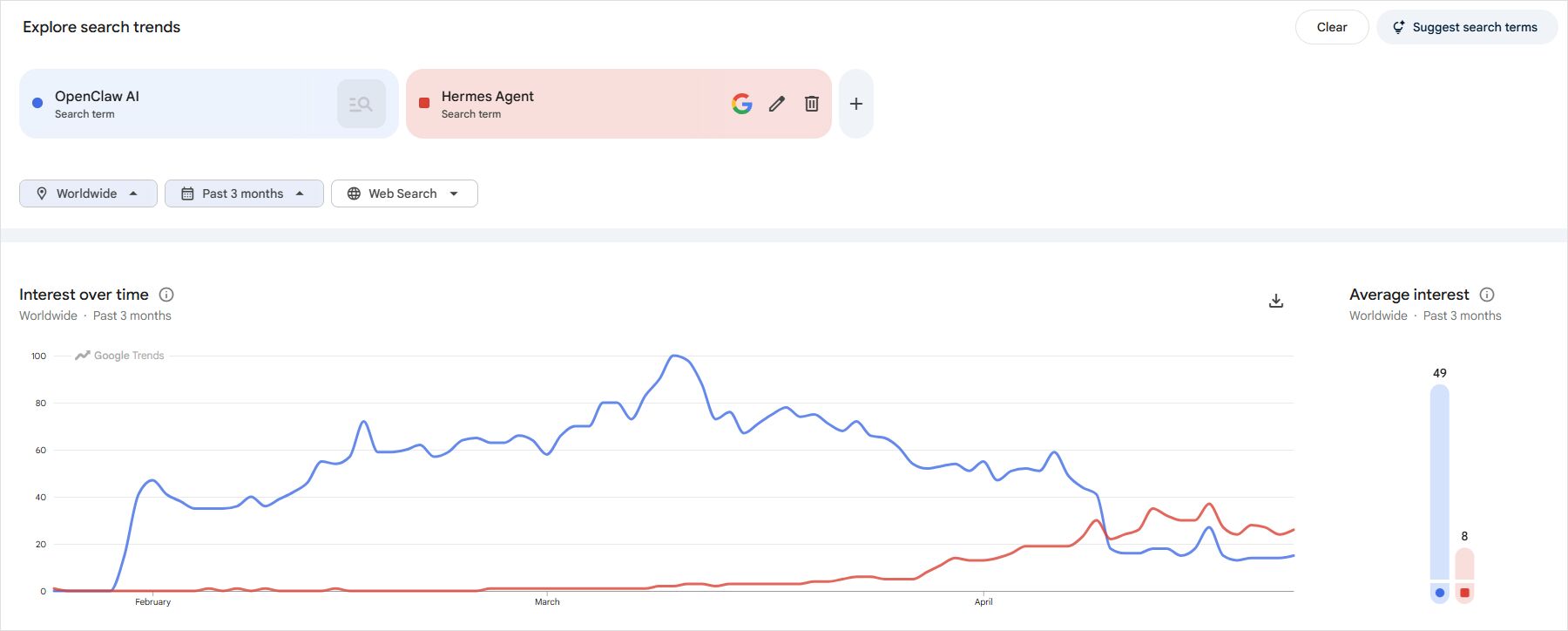

OpenClaw vs Hermes Agent — what the trend data shows

The chart above compares worldwide Google Trends search interest for OpenClaw AI (blue) and Hermes Agent (red) over the past three months. OpenClaw peaked at an index of 100 in mid-March 2026 and collapsed sharply in April after the subscription cutoff. Hermes Agent barely registered during OpenClaw’s peak, then gradually picked up interest as OpenClaw faded, reaching an index of around 40 in bursts through April. Averaged over the whole period, OpenClaw scored 49 and Hermes scored 8.

Hermes Agent is an open-source framework built by Nous Research and released in February 2026. Unlike OpenClaw, which is optimized for broad reactive tool use across many integrations, Hermes is built around a learning loop: it generates reusable skills from successful task completions, refines them through continued use, and maintains a persistent model of the user across sessions. The result is an agent that improves the more it is used on the same task types, rather than approaching each job from the same baseline. It reached 95,600 GitHub stars in its first seven weeks.

The gap in the chart is significant. OpenClaw’s hype surplus did not transfer to Hermes — it evaporated. Casual experimenters who had been running agents cheaply on Claude subscriptions simply left the space rather than migrating to an alternative. The users who did move to Hermes were the committed technical minority who needed persistent, self-hosted automation and were willing to set it up properly — which is exactly the kind of smaller, more sustainable user base that remains after every AI hype cycle collapses. For those users, Hermes production setup patterns are worth exploring.

Final takeaway — follow the economics, not the interface

OpenClaw did not rise because it was revolutionary — it rose because it unlocked something temporarily underpriced, and it fell not because it failed as a product but because that pricing advantage was removed by the platform it depended on.

This was not a product lifecycle. It was a pricing event.

Understanding this distinction is critical for predicting the next spike in AI tooling. The same pattern will repeat whenever a new compute subsidy appears, whether through a subscription loophole, a generous free tier, or a new open-weight model that undercuts established pricing. Track where compute is temporarily cheap and you will find the next wave of viral AI tools before the hype arrives.