Chunking Strategies in RAG Comparison: Alternatives, Trade‑offs, and Examples

Comparison of Chunking Strategies in RAG

Chunking is the most underestimated hyperparameter in Retrieval-Augmented Generation (RAG): it silently determines what your LLM “sees”, how expensive ingestion becomes, and how much of the LLM’s context window you burn per answer.

This article treats chunking as an engineering optimisation problem: define goals, pick a strategy, measure, then iterate.

If you’re new to RAG architecture, start with the main Retrieval-Augmented Generation (RAG) Tutorial: Architecture, Implementation, and Production Guide.

TL;DR (Executive summary)

RAG systems retrieve chunks, not documents. Chunking therefore defines the unit of retrieval, the unit of embedding cost, and the unit of evidence you can show or cite. In the original RAG formulation, retrieval supplies passages to generation; the passage boundaries are effectively your chunk boundaries.

A good chunking strategy aims for a point on the Pareto frontier across three axes: retrieval quality (recall/precision of evidence), coherence (chunks must be interpretable), and cost (embedding, storage, and query latency). There is no globally optimal chunk size or method, and production systems routinely mix strategies (e.g. structure-aware chunking for PDFs + semantic-aware splits for prose + AST chunking for code).

For most “documentation QA” and internal knowledge bases, a safe default is a structure-respecting recursive splitter with modest overlap (to reduce boundary loss), backed by a vector store with metadata filtering and optional reranking. LangChain’s RecursiveCharacterTextSplitter is a common implementation of this hierarchical-separator idea; overlap specifically exists to mitigate information loss when relevant context gets cut at boundaries.

When documents have strong structure (PDFs with headings, tables, lists, captions), element-based / structure-aware chunking can outperform token-count slicing while producing fewer chunks. A 2024 study on SEC filings found element-type-based chunking improved RAG results, and also reduced the number of chunks (and therefore vectors) roughly by half compared to structure-agnostic methods—cutting indexing cost and potentially improving query latency.

If you can afford more up-front compute, semantic chunking (split at topic shifts using embedding similarity) can materially improve retrieval fidelity for narrative text and mixed-topic pages. Older topic segmentation algorithms like TextTiling show the general principle: strong vocabulary/semantic shifts are good boundary candidates.

For very long, internally cross-referential material (policies, RFCs, standards, large manuals), hierarchical chunking + hierarchical retrieval/merging (parent/child nodes) can recover larger contiguous context on demand. LlamaIndex’s hierarchical node parser produces coarse-to-fine chunk hierarchies, and the AutoMergingRetriever can merge leaf nodes into parent nodes at retrieval time when enough related children are retrieved.

Chunking goals and trade-offs

Chunking is not just “split text so it fits into an embedding model”. It controls multiple downstream and operational behaviours.

Retrieval granularity vs retrieval noise. Smaller chunks increase the chance that the exact sentence containing an answer is retrievable (higher potential recall at fixed top‑k). But they also produce more vectors, increasing index size and sometimes surfacing “near matches” that are semantically similar but not actually evidential (lower precision). Dense retrievers like DPR were built around retrieving passages effectively for QA, highlighting that passage boundaries matter for end-to-end QA performance.

Context coherence vs boundary loss. Coherent chunks help the LLM reason correctly and reduce hallucinations by providing complete local context (definitions, constraints, prerequisites). Overlap reduces boundary loss but creates duplicate text, which can lead to redundant retrieval results and inflated prompt length if you don’t deduplicate/merge.

Embedding and indexing cost. Embedding cost is typically proportional to tokens embedded, and ingestion time scales with number of chunks (plus vector DB write overhead). For OpenAI embeddings, requests have a per-input max token limit (8192 tokens for all embedding models) and a max total tokens summed across inputs per request (300,000 tokens). For large corpora, the Batch API can reduce costs by ~50% with an asynchronous, 24‑hour turnaround—useful for backfills and periodic re-indexing.
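The two request limits above can be turned into a small batching helper. This is a minimal sketch, assuming you already have a token count per chunk (for example from a tokenizer of your choice); the function name `batch_by_token_budget` and its parameters are illustrative and not part of any SDK.

```python
from typing import Iterator

MAX_TOKENS_PER_INPUT = 8192       # per-input limit quoted in the text
MAX_TOKENS_PER_REQUEST = 300_000  # per-request total quoted in the text


def batch_by_token_budget(
    token_counts: list[int],
    *,
    max_per_input: int = MAX_TOKENS_PER_INPUT,
    max_per_request: int = MAX_TOKENS_PER_REQUEST,
) -> Iterator[list[int]]:
    """Yield lists of chunk indices whose summed token counts fit in one request."""
    batch: list[int] = []
    used = 0
    for i, n in enumerate(token_counts):
        if n > max_per_input:
            # An oversized chunk cannot be embedded as a single input.
            raise ValueError(f"chunk {i} has {n} tokens; re-split it before embedding")
        if batch and used + n > max_per_request:
            yield batch
            batch, used = [], 0
        batch.append(i)
        used += n
    if batch:
        yield batch


# 100 chunks of 8,000 tokens each: 37 fit under 300,000 tokens per request.
batches = list(batch_by_token_budget([8000] * 100))
print([len(b) for b in batches])  # [37, 37, 26]
```

Each yielded batch maps to one embeddings request; the same grouping can be reused to build Batch API jobs for backfills.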

Vector index size, RAM, and latency. More chunks means more vectors and potentially more memory and slower queries (depending on index type). FAISS explicitly frames index design as a set of trade-offs among search time, search quality, and memory per indexed vector; it also offers GPU implementations for fast exact and approximate search.

Downstream LLM prompt length / context window usage. The retriever’s output becomes prompt budget. A chunking strategy that consistently retrieves “just enough” context can improve answer quality and reduce cost. Conversely, overlap and too-large chunks inflate prompt length. In practice, you often tune: (chunk size, overlap, top‑k, reranking/merging) together.

Update/ingest cost and deduplication. Chunking affects how expensive it is to refresh data. Smaller chunks make partial updates cheaper (you can re-embed only the changed section) but also make deduplication harder if overlapping or near-duplicate chunks proliferate.

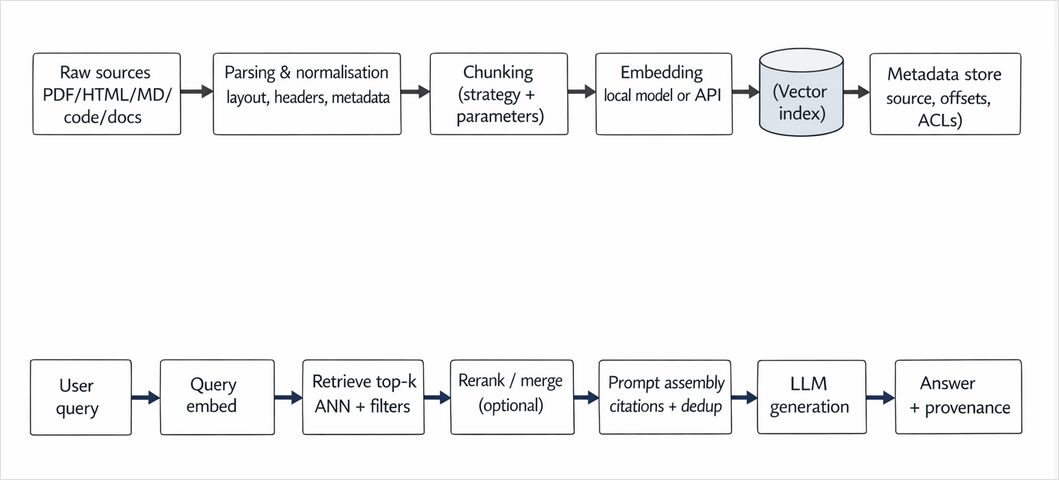

Where chunking sits in the RAG workflow

Chunking strategies and alternatives

Below are the main chunking families you’ll encounter in modern RAG. In practice, you’ll often blend two: structure-first chunking (respect document boundaries) plus token-budget enforcement (ensure chunks fit your embedding and prompt budgets).

Fixed-size chunking

What it is. Split text into equal-sized blocks by characters or tokens.

Why it exists. It’s simple, fast, predictable, and easy to parallelise. It is also the easiest strategy for streaming ingestion where you don’t have full document context.

Where it fails. It ignores boundaries (sentences, sections, code blocks) so it can break definitions or split “question/answer pairs” across chunks, increasing retrieval error.

Operational profile. Lowest ingest complexity; predictable chunk count; easiest caching. But you usually need overlap (below) to avoid boundary loss.

Overlap chunking

What it is. Any strategy where consecutive chunks share a fixed overlap region (e.g. 10–20% of chunk size). Overlap is standard in many frameworks because it reduces information loss when context is divided.

Why it matters. Overlap is effectively a “soft boundary”—it lets retrieval capture a fact that straddles a boundary.

Costs and pitfalls. More tokens embedded; more duplicate text in the index; higher risk of retrieving multiple nearly identical chunks unless you deduplicate at retrieval time (e.g., merge by source offsets or use MMR).
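Merging by source offsets, as mentioned above, can be sketched in a few lines. The `Hit` type and its fields are hypothetical; the sketch assumes every chunk stores the document ID and the start/end offsets it was cut from.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hit:
    doc_id: str
    start: int    # inclusive offset in the source document
    end: int      # exclusive offset
    score: float  # retrieval score, higher is better


def merge_overlapping_hits(hits: list[Hit]) -> list[Hit]:
    """Merge hits from the same document whose offset ranges overlap or touch."""
    merged: list[Hit] = []
    for h in sorted(hits, key=lambda x: (x.doc_id, x.start)):
        last = merged[-1] if merged else None
        if last is not None and last.doc_id == h.doc_id and h.start <= last.end:
            # Extend the previous span and keep the better of the two scores.
            merged[-1] = Hit(h.doc_id, last.start, max(last.end, h.end), max(last.score, h.score))
        else:
            merged.append(h)
    # Best-scoring evidence first.
    return sorted(merged, key=lambda x: x.score, reverse=True)


hits = [
    Hit("a", 0, 512, 0.8),
    Hit("a", 384, 896, 0.9),   # overlaps the first hit
    Hit("b", 0, 512, 0.7),
    Hit("a", 2000, 2512, 0.5),
]
for h in merge_overlapping_hits(hits):
    print(h)
```

The merged span is then re-read from the source text, so the prompt contains each overlapping region only once.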

Sentence- and paragraph-based chunking

What it is. Split text at sentence or paragraph boundaries, then pack sentences/paragraphs into chunks up to a token budget.

Why engineers like it. It improves coherence for natural language and is robust for docs with conventional punctuation and spacing.

Tooling. NLTK’s sent_tokenize() uses Punkt sentence boundary detection by default, and spaCy offers rule-based sentence boundary tools like Sentencizer (useful when you want sentence splits without a full dependency model).

Failure modes. Non-standard punctuation (logs, chat transcripts), tables, code, and bullet lists can break sentence segmentation assumptions.

Sliding window chunking

What it is. Create chunks using a fixed window size and a step (stride). This is the “systematic overlap” version of chunking.
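To see what a given window and stride cost before ingesting anything, the window count and total embedded tokens can be computed directly. This is a small sketch; `sliding_window_stats` is an illustrative name, and it assumes the last window is simply truncated at the end of the document.

```python
import math


def sliding_window_stats(n_tokens: int, window: int, stride: int) -> tuple[int, int]:
    """Return (number_of_windows, total_tokens_embedded) for one document."""
    if not 1 <= stride <= window:
        raise ValueError("stride must be in [1, window]")
    if n_tokens <= 0:
        return 0, 0
    if n_tokens <= window:
        return 1, n_tokens
    # Steps needed until a window reaches the end of the document.
    steps = math.ceil((n_tokens - window) / stride)
    # All windows but the last are full; the last covers whatever remains.
    total = steps * window + (n_tokens - steps * stride)
    return steps + 1, total


for stride in (512, 384, 128):
    windows, total = sliding_window_stats(1000, 512, stride)
    print(stride, windows, total, round(total / 1000, 2))
# 512 2 1000 1.0
# 384 3 1256 1.26
# 128 5 2536 2.54
```

The last column is the redundancy factor: how many tokens you embed per token of source text.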

When it’s good. Time series text, transcripts, chat logs, meeting minutes—anything where relevant facts can appear across local neighbourhoods and you want robust recall.

When it’s bad. It amplifies redundancy and can be expensive at scale. It also tends to retrieve redundant windows unless deduped.
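One way to reduce redundant windows at retrieval time is maximal marginal relevance (MMR), which trades relevance against similarity to results already selected. The following is a dependency-free sketch on plain Python lists; `mmr_select` and `lambda_mult` are illustrative names, and a production version would normally use vectorised operations.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def mmr_select(
    query_vec: list[float],
    cand_vecs: list[list[float]],
    *,
    k: int = 5,
    lambda_mult: float = 0.5,  # 1.0 = pure relevance, 0.0 = pure diversity
) -> list[int]:
    """Return indices of up to k candidates chosen by maximal marginal relevance."""
    relevance = [cosine(query_vec, v) for v in cand_vecs]
    selected: list[int] = []
    remaining = list(range(len(cand_vecs)))

    def mmr_score(i: int) -> float:
        # Penalise candidates that resemble something already selected.
        redundancy = max((cosine(cand_vecs[i], cand_vecs[j]) for j in selected), default=0.0)
        return lambda_mult * relevance[i] - (1.0 - lambda_mult) * redundancy

    while remaining and len(selected) < k:
        best = max(remaining, key=mmr_score)
        selected.append(best)
        remaining.remove(best)
    return selected


query = [1.0, 0.0]
candidates = [[0.9, 0.1], [0.9, 0.1], [0.9, -0.4]]  # index 1 duplicates index 0
print(mmr_select(query, candidates, k=2))  # [0, 2]
```

The exact duplicate at index 1 is skipped in favour of the slightly less relevant but non-redundant candidate at index 2.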

Recursive / separator-hierarchy chunking

What it is. Start with large “natural” separators (e.g. \n\n for paragraphs) and recursively split into smaller units (sentences, spaces) only when needed to stay under a size budget. LangChain documents this behaviour explicitly: a recursive splitter tries to keep larger units intact and only falls back to smaller separators when a unit still exceeds chunk size.

Why it’s a strong default. It respects structure without requiring complex document parsing. It’s a pragmatic sweet spot for Markdown, HTML-as-text, and documentation.

Key tuning knobs. chunk_size, chunk_overlap, and the length_function (characters vs tokens), plus custom separators for multi-language codebases.
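The separator-hierarchy idea can be illustrated without any framework. The sketch below is a simplified, character-based version with no overlap; it is not LangChain's implementation, and `recursive_split` is a hypothetical helper written only to show the fallback from large separators to small ones.

```python
def recursive_split(
    text: str,
    max_chars: int,
    separators: tuple[str, ...] = ("\n\n", "\n", ". ", " "),
) -> list[str]:
    """Split text so every piece is at most max_chars, preferring large separators."""
    text = text.strip()
    if not text:
        return []
    if len(text) <= max_chars:
        return [text]
    if not separators:
        # No separators left: hard cut at the character budget.
        return [text[i : i + max_chars] for i in range(0, len(text), max_chars)]

    sep, rest = separators[0], separators[1:]
    out: list[str] = []
    buf = ""
    for piece in text.split(sep):
        candidate = f"{buf}{sep}{piece}" if buf else piece
        if len(candidate) <= max_chars:
            buf = candidate  # keep packing units at this level
            continue
        if buf:
            out.append(buf)
        if len(piece) <= max_chars:
            buf = piece
        else:
            # The unit itself is too large: fall back to the next, smaller separator.
            out.extend(recursive_split(piece, max_chars, rest))
            buf = ""
    if buf:
        out.append(buf)
    # Note: a separator is dropped wherever a split actually happens.
    return [c.strip() for c in out if c.strip()]


print(recursive_split("aaaa bbbb\n\ncccc dddd eeee", 10))
# ['aaaa bbbb', 'cccc dddd', 'eeee']
```

The first paragraph fits and stays intact; only the second paragraph, which exceeds the budget, is broken down at spaces.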

Semantic (embedding-aware) chunking

What it is. Detect topic shifts using semantic representations (embeddings) and split where similarity drops. This mirrors classic segmentation ideas like TextTiling, which uses shifts in lexical cohesion to find subtopic boundaries.

Why it can outperform size-based chunking. You stop splitting at arbitrary token counts and instead align chunks with topic boundaries—often improving retrieval precision for multi-topic documents (blogs, design docs, tickets, incident reports).

Costs. You may need additional embeddings during chunking (sentence-level or paragraph-level embeddings) before final chunk embeddings. That can double or triple embedding calls unless you reuse intermediate embeddings.

Practical trick. “Semantic-aware packing”: compute sentence embeddings once, group sentences into topic-coherent segments, then embed each final segment.

Hierarchical chunking (parent/child)

What it is. Build a multi-granularity representation: coarse parent chunks (e.g., section-sized) with finer child chunks (e.g., paragraph-sized). LlamaIndex’s hierarchical node parsing produces “coarse-to-fine” hierarchies by default (e.g., 2048 → 512 → 128 token scales), and the AutoMergingRetriever can merge child nodes into parents at retrieval time when enough related children are retrieved.

Why it helps. It avoids choosing between “small chunks for recall” and “big chunks for coherence” by storing both, and selecting at query time.

Costs. More complex ingestion and retrieval logic, plus potentially more storage (because you store multiple granularities).
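The merge-at-retrieval-time idea can be sketched independently of any framework. This is a simplified illustration and not LlamaIndex's implementation; the function name, the `parent_of` / `children_of` mappings, and the 0.5 threshold are assumptions chosen for the example.

```python
from collections import defaultdict


def auto_merge(
    retrieved_children: list[str],
    parent_of: dict[str, str],
    children_of: dict[str, list[str]],
    *,
    threshold: float = 0.5,
) -> list[str]:
    """Replace child IDs by their parent ID when enough siblings were retrieved."""
    hits_per_parent: dict[str, set[str]] = defaultdict(set)
    for cid in retrieved_children:
        hits_per_parent[parent_of[cid]].add(cid)

    out: list[str] = []
    seen: set[str] = set()
    for cid in retrieved_children:  # preserve retrieval order
        pid = parent_of[cid]
        ratio = len(hits_per_parent[pid]) / len(children_of[pid])
        node = pid if ratio > threshold else cid
        if node not in seen:
            seen.add(node)
            out.append(node)
    return out


children_of = {"P1": ["c1", "c2", "c3", "c4"], "P2": ["c5", "c6", "c7", "c8"]}
parent_of = {c: p for p, cs in children_of.items() for c in cs}

# Three of P1's four children were retrieved, but only one of P2's.
print(auto_merge(["c1", "c5", "c2", "c3"], parent_of, children_of))  # ['P1', 'c5']
```

The caller then fetches the text for the returned IDs: the whole parent section where evidence clusters, individual children elsewhere.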

Adaptive / LLM-based chunking

What it is. Use an LLM to decide chunk boundaries (and optionally generate summaries or contextual headers). Weaviate explicitly describes LLM-based chunking as having the LLM create semantically coherent chunks, instead of relying on fixed rules or embedding similarity.

When it’s worth it. High-value corpora where correctness dominates cost (legal, compliance, support runbooks), and where documents are messy, heterogeneous, and poorly segmented.

Risks. Cost, latency, and nondeterminism. You’ll want caching, deterministic decoding, and regression tests (see evaluation section).

Structure- and element-based chunking (documents are not plain text)

What it is. Parse the document into elements (titles, paragraphs, lists, tables, captions) using a document understanding layer, then chunk using those elements. Unstructured’s chunking functions explicitly use metadata and document elements (produced by partitioning) to produce chunks for RAG. Docling’s HierarchicalChunker creates chunks per detected document element and attaches structural metadata such as headers/captions.

Evidence from recent work. A 2024 study on SEC filings argues paragraph-only chunking neglects document structure and proposes chunking by structural elements; it reports improved RAG results and fewer chunks/vectors than structure-agnostic approaches.

Why it matters for multimodal. Tables, figures, and captions often contain the ground truth. “Flattening” them into plain text can destroy signals that retrieval would otherwise exploit.

Code-aware chunking (AST/structure)

What it is. Chunk code by syntactic units (functions, classes, modules), optionally including docstrings and comments.

Why it matters. Fixed-size token splits tend to cut functions in half and separate docstrings from implementations—bad for code search and “explain this function” RAG use cases.

Implementation options. For Python, the built-in ast module is often enough. For multi-language repos, tree-sitter-based chunkers are common.

Evaluation dimensions and how to compare chunking strategies

Chunking should be benchmarked as a system component.

Retrieval quality metrics

Use standard IR metrics for the retrieval layer:

- Recall@k / Precision@k: Did the top‑k contain the gold evidence?

- MRR / nDCG: Did the gold evidence rank high?

BEIR is a widely used heterogeneous benchmark for IR evaluation across tasks/domains, and highlights trade-offs among sparse, dense, late-interaction, and reranking approaches.

Chunking affects these metrics because it defines what counts as “a relevant retrieved item”.
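The retrieval metrics above are short enough to implement directly. In this sketch, chunk IDs are plain strings and `relevant` is the set of gold chunk IDs for a query; because chunk IDs change with the chunking strategy, the gold set has to be re-derived per strategy (for example by mapping gold answer spans to the chunks that contain them).

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of gold chunks that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k results that are gold chunks."""
    if k <= 0:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / k


def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first gold chunk, or 0.0 if none was retrieved."""
    for rank, cid in enumerate(retrieved, start=1):
        if cid in relevant:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(runs: list[tuple[list[str], set[str]]]) -> float:
    """Average reciprocal rank over (retrieved, relevant) pairs, one per query."""
    return sum(reciprocal_rank(r, g) for r, g in runs) / len(runs) if runs else 0.0


retrieved = ["c3", "c7", "c1", "c9"]
gold = {"c1", "c2"}
print(recall_at_k(retrieved, gold, 3))     # 0.5
print(precision_at_k(retrieved, gold, 3))  # 0.333...
print(reciprocal_rank(retrieved, gold))    # 0.333...
```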

End-to-end RAG answer quality metrics

If you’re building QA or assistants, retrieval metrics are necessary but not sufficient. You also need:

- Context recall / precision: whether retrieved contexts contain relevant evidence and avoid noise.

- Faithfulness: whether the generated answer is supported by the retrieved context.

RAGAS provides concrete definitions and implementations for “faithfulness” and other RAG-oriented metrics.

System cost and performance dimensions

Chunking changes these levers:

Latency (p50/p95). Query latency usually increases with more vectors and more post-processing. Your vector index also matters: FAISS index types trade off search time, quality, memory, and training/adding time.[^faiss]

Embedding cost and throughput. OpenAI embeddings are billed per tokens; the embeddings API has explicit per-input and per-request limits.[^openai_embed_create] For offline ingestion, the Batch API reduces cost and offers higher quota in exchange for non-real-time turnaround.[^openai_batch]

Index size and memory. Roughly, storing N float32 vectors of dimension d costs ~4 * N * d bytes just for the raw vectors (plus metadata + index overhead). Chunking impacts N. Embedding dimensionality impacts d, and OpenAI’s embeddings API allows controlling output dimensionality via the dimensions parameter.[^openai_embed_create]
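The raw-vector formula is easy to sanity-check with a few lines. The corpus size and dimensions below are illustrative assumptions, not measurements; real indexes add metadata and index-structure overhead on top.

```python
def raw_vector_bytes(n_vectors: int, dim: int, bytes_per_value: int = 4) -> int:
    """Bytes needed for the raw vectors alone (float32 by default)."""
    return n_vectors * dim * bytes_per_value


def to_gib(n_bytes: int) -> float:
    return n_bytes / 2**30


# Hypothetical corpus: one million chunks.
for dim in (1536, 512):
    size = raw_vector_bytes(1_000_000, dim)
    print(dim, size, round(to_gib(size), 2))
# 1536 6144000000 5.72
# 512 2048000000 1.91
```

Halving the number of chunks or reducing the output dimensionality both shrink this figure linearly, which is why chunking and the dimensions setting are worth tuning together.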

LLM prompt budget. Bigger chunks and overlap inflate prompt tokens. This can increase latency and cost, and increase “lost in the middle” style failure modes where models pay less attention to some context. In practice you often:

- retrieve small chunks,

- merge/deduplicate,

- optionally summarise,

- send a compact evidence set to the LLM.

Update/ingest cost. Smaller chunks allow partial re-embedding but increase bookkeeping. For streaming ingestion, prefer deterministic, incremental chunking (fixed or sliding window) and attach stable IDs (document_id, offset ranges, hash).
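Stable IDs make partial updates a set difference. The sketch below hashes the document ID, offset range, and text, as suggested above; the helper names `stable_chunk_id` and `plan_reindex` are hypothetical.

```python
import hashlib


def stable_chunk_id(document_id: str, start: int, end: int, text: str) -> str:
    """Deterministic ID: the same document, offsets, and text always give the same ID."""
    h = hashlib.sha256()
    for part in (document_id, str(start), str(end), text):
        h.update(part.encode("utf-8"))
        h.update(b"\x1e")  # separator byte avoids ambiguous concatenations
    return h.hexdigest()


def plan_reindex(old_ids: set[str], new_ids: set[str]) -> tuple[set[str], set[str]]:
    """Return (ids_to_embed, ids_to_delete) after re-chunking a document."""
    return new_ids - old_ids, old_ids - new_ids


old = {stable_chunk_id("doc-1", 0, 5, "hello"), stable_chunk_id("doc-1", 5, 10, "world")}
new = {stable_chunk_id("doc-1", 0, 5, "hello"), stable_chunk_id("doc-1", 5, 10, "there")}

to_embed, to_delete = plan_reindex(old, new)
print(len(to_embed), len(to_delete))  # 1 1
```

One design caveat: because offsets are part of the ID, an insertion near the top of a document shifts every later offset and invalidates those IDs. If that happens often, hashing only the document ID and text gives content-addressed IDs that survive shifts, at the price of collapsing identical passages within a document.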

Experimental design: a pragmatic benchmark loop

A reproducible chunking benchmark typically has:

- A fixed corpus snapshot + fixed set of queries with gold evidence (or at least expected answer spans).

- A fixed embedding model and vector index configuration.

- A “retrieval-only” evaluation (recall@k, nDCG) plus “RAG” evaluation (faithfulness, answer relevance).

- Cost telemetry: #chunks, embedded tokens, $/month storage, p95 query latency, prompt tokens.

The Unstructured SEC-filings paper is a good example of evaluating chunking strategies with both retrieval-oriented metrics and QA accuracy measures.
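Once a benchmark has produced quality and cost numbers per configuration, the non-dominated configurations can be picked out mechanically. This is a minimal two-objective sketch; the `RunResult` type is hypothetical and the numbers in the example are made up purely to illustrate the mechanics.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunResult:
    name: str
    recall_at_k: float    # higher is better
    embedded_tokens: int  # lower is better


def dominates(a: RunResult, b: RunResult) -> bool:
    """a dominates b if it is no worse on both axes and strictly better on one."""
    no_worse = a.recall_at_k >= b.recall_at_k and a.embedded_tokens <= b.embedded_tokens
    better = a.recall_at_k > b.recall_at_k or a.embedded_tokens < b.embedded_tokens
    return no_worse and better


def pareto_front(runs: list[RunResult]) -> list[RunResult]:
    """Keep only runs that no other run dominates."""
    return [r for r in runs if not any(dominates(o, r) for o in runs)]


# Illustrative, invented numbers: replace with your own benchmark output.
runs = [
    RunResult("fixed_512", 0.70, 1_000_000),
    RunResult("fixed_512_overlap", 0.76, 1_250_000),
    RunResult("recursive", 0.78, 1_050_000),
    RunResult("sliding_window", 0.77, 2_500_000),
]
print([r.name for r in pareto_front(runs)])  # ['fixed_512', 'recursive']
```

Everything off the front is strictly worse than some alternative on the measured axes; choosing among the survivors is a budget decision rather than a technical one.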

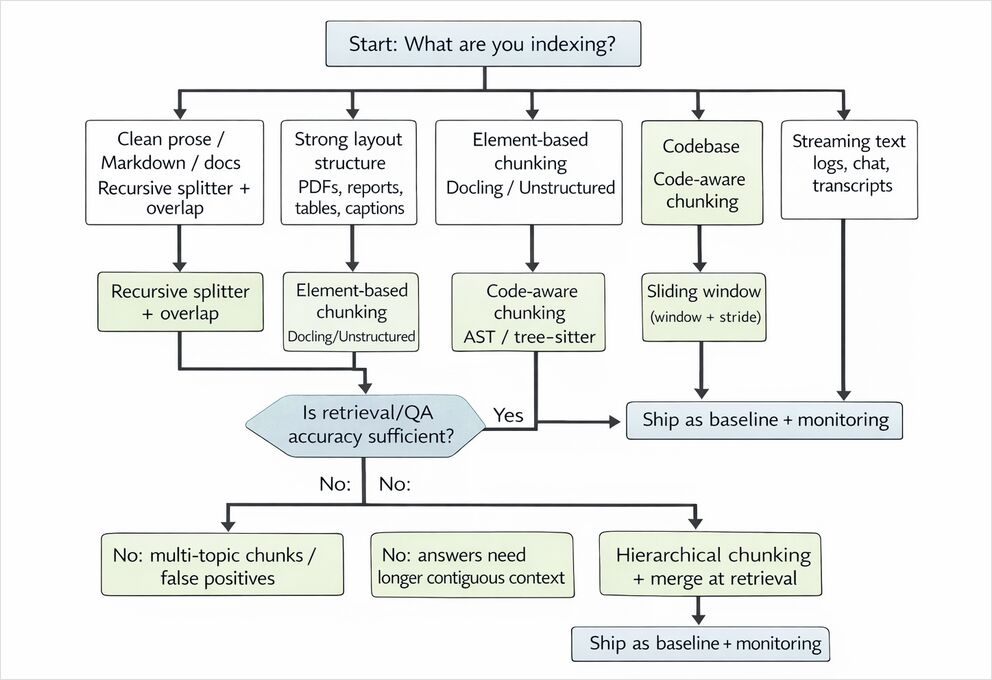

Practical guidelines, decision matrix, and recommended defaults

Recommended defaults that work surprisingly well

If you need a robust “day 1” strategy for general documentation QA:

- Parse lightly: preserve headings and basic metadata (source, section title, URL/path, timestamp).

- Chunk with a recursive separator splitter (paragraph → sentence → word), with modest overlap.

- Embed with a strong general embedding model.

- Index with metadata (doc id, section, ACLs) and deduplicate during retrieval.

- Add reranking or hierarchical merging only if your evaluation shows a gap.

This aligns with how common RAG frameworks describe chunk overlap and structure-respecting splitting.

Which chunking method to use - Decision matrix

| Use case | Recommended chunking default | Key parameters to tune | Common failure mode | Upgrade path |
|---|---|---|---|---|
| Short-form QA over docs (FAQs, internal wiki) | Recursive/separator chunking + overlap | chunk_size, overlap, top‑k | Missing cross-sentence evidence at boundary | Add semantic chunking or reranker |
| Long-form QA (policies, standards, manuals) | Hierarchical chunking + merging retriever | parent/child sizes, merge threshold | Retrieves small fragments; LLM lacks full context | Auto-merging/hierarchical retrieval |
| Summarisation (per doc / per section) | Structure-aware chunks (sections) | section detection, max tokens | Summaries miss cross-section links | Hierarchical summarisation + section graph |
| Code search & “explain this function” | AST/function-level chunks | include docstring/comments, max tokens | Function split; loses signature/usage | Repo-aware hierarchy (module→class→func) |
| Multimodal PDFs (tables/figures) | Element-based chunking (title/table/caption aware) | table serialisation, caption merging | Table content lost or mangled | Use Docling/Unstructured + structured serialisers |
| Streaming ingestion (logs, chats, tickets) | Sliding window or fixed-size tokens | window, stride, dedup | Over-retrieval of redundant windows | Add semantic boundary detection on batches |

Chunking - Qualitative performance comparison

Treat this as “expected direction of change” (validate with your own data).

| Strategy | Retrieval accuracy potential | Coherence of retrieved context | Ingest complexity | Vector count / index size | Embedding cost | Query latency impact | Best for |
|---|---|---|---|---|---|---|---|
| Fixed-size (no overlap) | Medium | Low | Low | Medium | Low | Medium | quick prototypes, homogeneous text |
| Fixed-size + overlap | Medium–High | Medium | Low | High | Medium–High | Medium–High | QA where boundary loss hurts |
| Sentence/paragraph packing | High (prose) | High | Medium | Medium | Medium | Medium | docs, articles, clean prose |
| Sliding window | High recall | Medium | Medium | Very high | Very high | High | transcripts, logs, chat |
| Recursive/separator | High | High | Medium | Medium | Medium | Medium | “default” docs RAG |
| Semantic chunking | High–Very high | High | High | Medium | High | Medium | multi-topic pages, narrative text |
| Hierarchical (parent/child) | Very high | Very high | High | High | High | Medium | long manuals / standards |
| LLM-based/adaptive | Very high | Very high | Very high | Medium | Very high | Medium–High | high-stakes corpora |
| Element-/structure-based | High–Very high | High | High | Low–Medium | Medium | Medium | PDFs, reports, tables, mixed layouts |
| Code-aware (AST) | High (code) | High | Medium | Medium | Medium | Medium | code search, repo assistants |

DevOps and hardware notes (often overlooked)

Chunking choices affect how much infrastructure you need:

- Smaller chunks → more vectors → larger indexes and more RAM/disk. For self-hosted FAISS, this can force sharding or disk-backed indexes.

- If you embed locally, embedding throughput becomes a GPU scheduling problem; if you embed via API, token volume becomes a FinOps problem (Batch API is your friend for backfills).

- Some engines (FAISS) provide GPU-accelerated search; this can shift cost from RAM-bound CPU to GPU memory and PCIe throughput.

- Structure-aware parsing (PDF layout, OCR, table extraction) is often CPU-bound and can dwarf embedding cost for scanned docs; budget it separately.

Chunking - Python reference implementations

All examples are designed to be readable and runnable. Where an example needs an API key or a running database, that is clear from the code.

Shared utilities: token counting and stable chunk IDs

from __future__ import annotations

import hashlib
from dataclasses import dataclass
from typing import Any

from transformers import AutoTokenizer  # pip install transformers


@dataclass(frozen=True)
class Chunk:
    text: str
    meta: dict[str, Any]


def sha1_id(*parts: str) -> str:
    """Build a stable hex ID from string parts."""
    h = hashlib.sha1()
    for p in parts:
        h.update(p.encode("utf-8"))
        h.update(b"\x1e")  # separator byte so ("ab", "c") and ("a", "bc") differ
    return h.hexdigest()


# Use any tokenizer that roughly matches your LLM/embedding tokenisation.
# NOTE: bert-base-uncased lowercases text, so chunkers that call TOKENIZER.decode()
# return normalised text rather than the exact original string.
TOKENIZER = AutoTokenizer.from_pretrained("bert-base-uncased")


def token_len(text: str) -> int:
    return len(TOKENIZER.encode(text, add_special_tokens=False))

Fixed-size token chunking

def chunk_fixed_tokens(
    text: str,
    *,
    chunk_size: int = 512,
) -> list[Chunk]:
    token_ids = TOKENIZER.encode(text, add_special_tokens=False)
    out: list[Chunk] = []
    # Step through the token stream in non-overlapping blocks of chunk_size
    for i in range(0, len(token_ids), chunk_size):
        window = token_ids[i : i + chunk_size]
        chunk_text = TOKENIZER.decode(window)
        out.append(
            Chunk(
                text=chunk_text,
                meta={"strategy": "fixed_tokens", "start_token": i, "end_token": i + len(window)},
            )
        )
    return out

Fixed-size with overlap + sliding window

def chunk_sliding_window(
    text: str,
    *,
    window_tokens: int = 512,
    stride_tokens: int = 384,  # smaller stride = more overlap
) -> list[Chunk]:
    assert 1 <= stride_tokens <= window_tokens, "stride must be within (0, window]"
    token_ids = TOKENIZER.encode(text, add_special_tokens=False)
    out: list[Chunk] = []
    start = 0
    while start < len(token_ids):
        end = min(start + window_tokens, len(token_ids))
        window = token_ids[start:end]
        out.append(
            Chunk(
                text=TOKENIZER.decode(window),
                meta={"strategy": "sliding_window", "start_token": start, "end_token": end},
            )
        )
        # Stop once a window reaches the end, so no tail-only duplicate is emitted
        if end == len(token_ids):
            break
        start += stride_tokens
    return out

Sentence-based chunking (NLTK) with token-budget packing

# pip install nltk

import nltk
from nltk.tokenize import sent_tokenize

nltk.download("punkt", quiet=True)
nltk.download("punkt_tab", quiet=True)  # newer NLTK releases load Punkt data from this resource


def chunk_by_sentences_nltk(
    text: str,
    *,
    max_tokens: int = 512,
    overlap_sentences: int = 1,
) -> list[Chunk]:
    sents = [s.strip() for s in sent_tokenize(text) if s.strip()]
    out: list[Chunk] = []
    buf: list[str] = []
    buf_tokens = 0
    fresh = 0  # sentences in buf that have not been emitted yet

    def flush() -> None:
        nonlocal buf, buf_tokens, fresh
        if fresh == 0:
            return  # buf is empty or holds only carried-over overlap
        out.append(
            Chunk(
                text=" ".join(buf).strip(),
                meta={"strategy": "sentences_nltk", "sent_count": len(buf)},
            )
        )
        # Overlap by keeping the last N sentences
        buf = buf[-overlap_sentences:] if overlap_sentences > 0 else []
        buf_tokens = token_len(" ".join(buf)) if buf else 0
        fresh = 0

    for s in sents:
        s_tokens = token_len(s)
        # If a single sentence exceeds the budget, fall back to fixed-size chunking on that span
        if s_tokens > max_tokens:
            flush()
            out.extend(chunk_fixed_tokens(s, chunk_size=max_tokens))
            buf, buf_tokens, fresh = [], 0, 0  # no overlap is carried across the fallback
            continue
        if buf_tokens + s_tokens > max_tokens:
            flush()
            # Drop the carried-over overlap if it would still overflow the budget
            if buf_tokens + s_tokens > max_tokens:
                buf, buf_tokens = [], 0
        buf.append(s)
        buf_tokens += s_tokens
        fresh += 1

    flush()
    return out

Sentence-based chunking (spaCy) when you want rule-based or model-based SBD

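# pip install spacy
# python -m spacy download en_core_web_sm   (only needed for model-based splitting)

import spacy


def chunk_by_sentences_spacy(
    text: str,
    *,
    max_tokens: int = 512,
) -> list[Chunk]:
    # For lightweight rule-based splits (no dependency parse), use sentencizer.
    nlp = spacy.blank("en")
    nlp.add_pipe("sentencizer")  # rule-based sentence boundary detection
    doc = nlp(text)
    sents = [sent.text.strip() for sent in doc.sents if sent.text.strip()]

    # Pack the spaCy sentences directly (no sentence overlap in this variant).
    # Re-joining them and calling the NLTK chunker would re-split the text with
    # Punkt and discard the boundaries found above.
    out: list[Chunk] = []
    buf: list[str] = []
    buf_tokens = 0

    def emit() -> None:
        if buf:
            out.append(
                Chunk(text=" ".join(buf), meta={"strategy": "sentences_spacy", "sent_count": len(buf)})
            )

    for s in sents:
        s_tokens = token_len(s)
        if buf_tokens + s_tokens > max_tokens:
            emit()
            buf, buf_tokens = [], 0
        if s_tokens > max_tokens:
            # Oversized sentence: fall back to fixed-size chunking on that span
            out.extend(chunk_fixed_tokens(s, chunk_size=max_tokens))
            continue
        buf.append(s)
        buf_tokens += s_tokens

    emit()
    return out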

Recursive separator chunking (LangChain)

# pip install langchain-text-splitters

from langchain_text_splitters import RecursiveCharacterTextSplitter


def chunk_recursive_langchain(
    text: str,
    *,
    chunk_size: int = 1200,
    chunk_overlap: int = 150,
) -> list[Chunk]:
    # With length_function=token_len, chunk_size and chunk_overlap are measured in tokens
    splitter = RecursiveCharacterTextSplitter(
        chunk_size=chunk_size,
        chunk_overlap=chunk_overlap,
        length_function=token_len,  # token-aware budgeting
        separators=["\n\n", "\n", ". ", " ", ""],  # customise for your content (e.g., code)
    )
    pieces = splitter.split_text(text)
    return [
        Chunk(text=p, meta={"strategy": "recursive_langchain", "chunk_size": chunk_size, "overlap": chunk_overlap})
        for p in pieces
    ]

Semantic chunking with embedding similarity (OpenAI embeddings)

This approach computes embeddings for candidate units (sentences/paragraphs), then finds semantic “breakpoints”.

# pip install openai numpy nltk

import os

import numpy as np
from nltk.tokenize import sent_tokenize
from openai import OpenAI

client = OpenAI(api_key=os.getenv("OPENAI_API_KEY", "YOUR_OPENAI_API_KEY"))


def embed_texts_openai(texts: list[str], *, model: str = "text-embedding-3-small") -> np.ndarray:
    # NOTE: for large batches, respect request token limits and batch size limits.
    resp = client.embeddings.create(model=model, input=texts)
    embs = np.array([d.embedding for d in resp.data], dtype=np.float32)
    return embs


def cosine_sim(a: np.ndarray, b: np.ndarray) -> float:
    denom = (np.linalg.norm(a) * np.linalg.norm(b)) + 1e-8
    return float(np.dot(a, b) / denom)


def chunk_semantic(
    text: str,
    *,
    max_tokens: int = 800,
    breakpoint_threshold: float = 0.70,
) -> list[Chunk]:
    # 1) Start from sentence candidates
    sents = [s.strip() for s in sent_tokenize(text) if s.strip()]
    if len(sents) <= 1:
        return [Chunk(text=text, meta={"strategy": "semantic", "note": "no_split"})]

    # 2) Embed each sentence
    embs = embed_texts_openai(sents)

    # 3) Compute similarity between each sentence and the next one
    sims = [cosine_sim(embs[i], embs[i + 1]) for i in range(len(sents) - 1)]

    # 4) Create segments at breakpoints
    out: list[Chunk] = []
    buf: list[str] = []
    buf_tokens = 0
    for i, s in enumerate(sents):
        # Close the current segment first if adding this sentence would exceed the budget
        s_tok = token_len(s)
        if buf and buf_tokens + s_tok > max_tokens:
            out.append(Chunk(text=" ".join(buf), meta={"strategy": "semantic", "reason": "max_tokens"}))
            buf, buf_tokens = [], 0
        buf.append(s)
        buf_tokens += s_tok

        # Decide breakpoint after sentence i (based on similarity to next)
        if i < len(sims) and sims[i] < breakpoint_threshold:
            out.append(
                Chunk(
                    text=" ".join(buf),
                    meta={"strategy": "semantic", "reason": "sim_drop", "sim_to_next": sims[i]},
                )
            )
            buf, buf_tokens = [], 0

    if buf:
        out.append(Chunk(text=" ".join(buf), meta={"strategy": "semantic", "reason": "final"}))
    return out

Hierarchical chunking + merging retrieval (LlamaIndex)

# pip install llama-index llama-index-llms-openai

from llama_index.core import Document, StorageContext, VectorStoreIndex
from llama_index.core.node_parser import HierarchicalNodeParser, get_leaf_nodes
from llama_index.core.retrievers import AutoMergingRetriever
from llama_index.core.storage.docstore import SimpleDocumentStore


def build_hierarchical_index(text: str):
    docs = [Document(text=text)]
    node_parser = HierarchicalNodeParser.from_defaults()  # defaults to coarse-to-fine sizes
    nodes = node_parser.get_nodes_from_documents(docs)

    # The docstore holds every level so parents can be looked up at merge time
    docstore = SimpleDocumentStore()
    docstore.add_documents(nodes)
    storage_context = StorageContext.from_defaults(docstore=docstore)

    # Only the leaf nodes are embedded and indexed
    leaf_nodes = get_leaf_nodes(nodes)
    base_index = VectorStoreIndex(leaf_nodes, storage_context=storage_context)
    base_retriever = base_index.as_retriever(similarity_top_k=6)

    retriever = AutoMergingRetriever(base_retriever, storage_context, verbose=True)
    return retriever

Element-based chunking for PDFs (Docling)

# pip install docling
# NOTE: PDF parsing quality depends on your environment (fonts, OCR, etc.).

from docling.document_converter import DocumentConverter
# HierarchicalChunker lives in docling-core, which is installed with docling
from docling_core.transforms.chunker.hierarchical_chunker import HierarchicalChunker


def chunk_pdf_docling(pdf_path: str) -> list[Chunk]:
    converter = DocumentConverter()
    doc = converter.convert(pdf_path).document  # DoclingDocument
    chunker = HierarchicalChunker()
    doc_chunks = list(chunker.chunk(doc))

    out: list[Chunk] = []
    for c in doc_chunks:
        # c.text contains serialised chunk content; c.meta carries structure info
        out.append(Chunk(text=c.text, meta={"strategy": "docling_hierarchical", **dict(c.meta)}))
    return out

Code-aware chunking for Python (AST)

import ast


def chunk_python_by_ast(code: str, *, filepath: str = "<memory>") -> list[Chunk]:
    tree = ast.parse(code)
    lines = code.splitlines()  # split once, not once per node
    out: list[Chunk] = []
    for node in tree.body:
        # Only top-level functions and classes become chunks
        if not isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            continue
        end = getattr(node, "end_lineno", None)
        if end is None:
            continue
        # node.lineno points at the def/class line; start at the first decorator if there is one
        start = min([node.lineno] + [d.lineno for d in node.decorator_list])
        snippet = "\n".join(lines[start - 1 : end])
        kind = "class" if isinstance(node, ast.ClassDef) else "function"
        out.append(
            Chunk(
                text=snippet,
                meta={
                    "strategy": "python_ast",
                    "kind": kind,
                    "name": node.name,
                    "filepath": filepath,
                    "start_line": start,
                    "end_line": end,
                },
            )
        )
    return out

Indexing examples

FAISS (local) — minimal, fast baseline

# pip install faiss-cpu numpy

import numpy as np
import faiss


def build_faiss_index(vectors: np.ndarray) -> faiss.Index:
    # vectors: shape (N, d); FAISS expects C-contiguous float32
    vectors = np.ascontiguousarray(vectors, dtype=np.float32)
    d = vectors.shape[1]
    index = faiss.IndexFlatIP(d)  # inner product; equals cosine similarity if vectors are normalised
    faiss.normalize_L2(vectors)  # in place: also changes the caller's array when no copy was needed above
    index.add(vectors)
    return index


def faiss_search(index: faiss.Index, query_vec: np.ndarray, k: int = 5):
    q = query_vec.astype(np.float32).reshape(1, -1)
    faiss.normalize_L2(q)
    scores, ids = index.search(q, k)
    return ids[0].tolist(), scores[0].tolist()

Chroma (local) — simple persistence for RAG prototyping

# pip install chromadb numpy

import chromadb
import numpy as np


def build_chroma_collection(chunks: list[Chunk], embeddings: np.ndarray, *, path: str = "./chroma_store"):
    client = chromadb.PersistentClient(path=path)
    col = client.get_or_create_collection(name="docs")

    # Deterministic IDs make repeated upserts idempotent
    ids = [sha1_id(c.meta.get("strategy", "chunk"), str(i), c.text[:50]) for i, c in enumerate(chunks)]
    col.upsert(
        ids=ids,
        documents=[c.text for c in chunks],
        metadatas=[c.meta for c in chunks],
        embeddings=embeddings.tolist(),
    )
    return col

Weaviate (self-hosted / cloud) — bring-your-own vectors

# pip install weaviate-client numpy

import numpy as np
import weaviate
from weaviate.classes.config import Configure


def weaviate_upsert_self_provided(
    chunks: list[Chunk],
    embeddings: np.ndarray,
):
    client = weaviate.connect_to_local()  # or connect_to_weaviate_cloud(...)
    try:
        collection = client.collections.create(
            name="Chunk",
            vector_config=Configure.Vectors.self_provided(),  # you provide vectors
        )

        with collection.batch.dynamic() as batch:
            for c, v in zip(chunks, embeddings):
                batch.add_object(
                    # Metadata values are stringified and stored under "m_"-prefixed properties
                    properties={"text": c.text, **{f"m_{k}": str(vv) for k, vv in c.meta.items()}},
                    vector=v.tolist(),
                )

        # Query by near-vector
        q = embeddings[0]
        res = collection.query.near_vector(near_vector=q.tolist(), limit=5)
        for obj in res.objects:
            print(obj.properties.get("text")[:200])
    finally:
        client.close()

Some Source Docs

- Patrick Lewis et al., “Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks”, NeurIPS 2020; arXiv:2005.11401.

- Vladimir Karpukhin et al., “Dense Passage Retrieval for Open-Domain Question Answering”, EMNLP 2020; arXiv:2004.04906.

- OpenAI API Reference: Create embeddings (/v1/embeddings): token limits (8192 per input; 300k total per request) and the dimensions parameter.

- OpenAI Batch API guide + OpenAI API pricing page (Batch saves ~50% with 24h turnaround).

- LangChain docs: RecursiveCharacterTextSplitter and splitters integration guide (chunk size/overlap, recursive separator hierarchy).

- LlamaIndex docs: HierarchicalNodeParser and AutoMergingRetriever (coarse-to-fine nodes; retrieval-time merging).

- Weaviate blog: “Chunking Strategies to Improve LLM RAG Pipeline Performance” (LLM-based chunking description and trade-offs).

- Docling docs: HierarchicalChunker creates chunks from document element structure and attaches headers/captions metadata.

- Jimeno Yepes et al., “Financial Report Chunking for Effective Retrieval Augmented Generation” (arXiv:2402.05131v3, 2024).

- Marti A. Hearst, “TextTiling: Segmenting Text into Multi-Paragraph Subtopic Passages”, Computational Linguistics, 1997 (ACL Anthology: J97-1003).

- FAISS documentation / GitHub repository: trade-offs among search time, quality, memory; optional GPU support.

- Nandan Thakur et al., “BEIR: A Heterogeneous Benchmark for Zero-shot Evaluation of Information Retrieval Models”, NeurIPS 2021; arXiv:2104.08663.

- RAGAS documentation: “Faithfulness” metric and related RAG evaluation metrics.

- NLTK documentation: nltk.tokenize.sent_tokenize, the recommended Punkt-based sentence tokenizer.

- spaCy API docs: Sentencizer, rule-based sentence boundary detection without dependency parsing.

Other Useful links

- Advanced RAG: LongRAG, Self-RAG and GraphRAG Explained

- Vector Stores for RAG Comparison

- Reranking with embedding models

- Reranking text documents with Ollama and Qwen3 Embedding model - in Go

- Reranking text documents with Ollama and Qwen3 Reranker model - in Go

- Self-Hosting Cognee (LLM Memory): LLM Performance Tests

- LLM Hosting: Local, Self-Hosted & Cloud Infrastructure Compared

- LLM Performance: Benchmarks, Bottlenecks & Optimization

- Retrieval-Augmented Generation (RAG) Tutorial: Architecture, Implementation, and Production Guide