Observability for LLM Systems: Metrics, Traces, Logs, and Testing in Production

End-to-end observability strategy for LLM inference and LLM applications

LLM systems fail in ways that traditional API monitoring cannot surface — queues fill silently, GPU memory saturates long before CPU looks busy, and latency blows up at the batching layer rather than the application layer. This guide covers an end-to-end observability strategy for LLM inference and LLM applications: what to measure, how to instrument it with Prometheus, OpenTelemetry, and Grafana, and how to deploy the telemetry pipeline at scale.

TL;DR (Executive summary)

LLM systems degrade in ways that classic “HTTP latency + error rate” monitoring cannot explain. Production-grade observability for LLM systems must answer, quickly and defensibly:

- Whether user experience is degrading (tail latency, time-to-first-token, inter-token latency, errors and aborts).

- Where time is spent (queueing vs batching vs model execution; retrieval/tools/safety filters vs inference).

- What saturates first (GPU utilisation and memory pressure, KV-cache/queue pressure, CPU tokenisation).

- How cost and capacity drift (tokens per request, tokens/sec per GPU, cache hit rate, wasted generations).

- Whether telemetry is safe to store (prompts can contain PII; prevent sensitive leakage into logs/attributes).

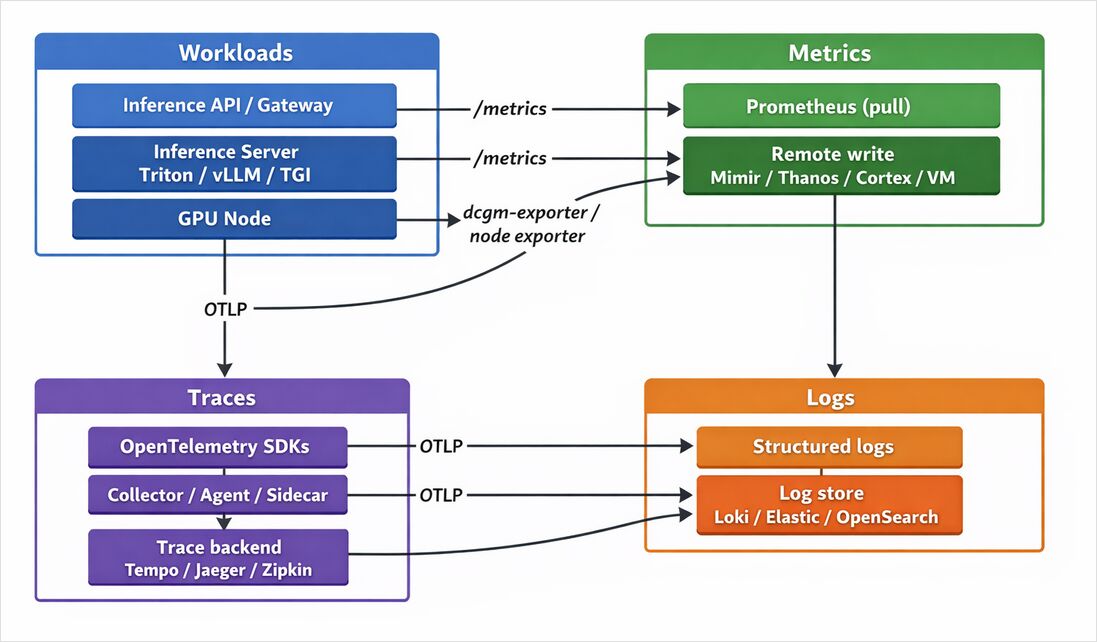

The most resilient design is a multi-signal pipeline:

- Metrics for fast detection and capacity planning (Prometheus + PromQL; optional long-term storage via Thanos/Cortex/Mimir/VictoriaMetrics).

- Traces for request-level causality (OpenTelemetry with OTLP; backends such as Tempo/Jaeger/Zipkin/Elastic APM).

- Logs for context, correlated to traces (Loki/Elastic/OpenSearch), designed for low-cardinality metadata.

- Profiling for CPU/memory hotspots and tail latency (Grafana Pyroscope).

- Synthetic + load tests to detect regressions before users do (Grafana k6; blackbox-style probing).

- SLOs to measure user outcomes and drive actionable alerting (error budgets; burn-rate style).

What makes observability for LLM systems different

Scope note: this article is not tied to a single LLM framework. Examples cover common servers and frameworks (Triton, vLLM, TGI, LangChain/LangSmith) and apply to other stacks by substituting the equivalent metrics and spans.

LLMs introduce operational behaviours that differ from conventional web services:

- Variable work per request: token counts (input/output) vary widely, so “requests per second” can look stable while token throughput collapses. TGI and vLLM explicitly export token- and token-latency-related telemetry to support this style of monitoring.

- Queueing + continuous batching: throughput depends on batching/queue disciplines; queue size and batch size become first-class indicators (TGI exposes both).

- Streaming UX: users care about TTFT and inter-token latency at least as much as full response time; OpenTelemetry even standardises server TTFT/time-per-token metrics under GenAI semantic conventions.

- GPU pressure dominates failure modes: GPU utilisation and GPU memory usage are central to reliability; NVIDIA’s DCGM exporter exists specifically to expose GPU telemetry at a Prometheus `/metrics` endpoint.

- Multi-step pipelines: retrieval, tool calls, safety filters, and post-processing mean end-to-end latency is a composition of multiple spans and queues, which makes distributed tracing and careful metric design essential.
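To make the streaming metrics concrete, here is a minimal sketch of how a client or gateway could derive TTFT and time per output token from token arrival timestamps. It follows the OpenTelemetry GenAI definition of time per output token (time per token generated after the first one); the `stream_timing` function and its inputs are hypothetical, not part of any server's API.

```python
from dataclasses import dataclass


@dataclass
class StreamTiming:
    ttft_s: float                   # request start -> first token
    time_per_output_token_s: float  # mean gap between tokens after the first
    e2e_s: float                    # request start -> last token


def stream_timing(request_start: float, token_times: list[float]) -> StreamTiming:
    """Derive streaming latency metrics from monotonic timestamps (seconds)."""
    if not token_times:
        raise ValueError("no tokens were streamed")
    first, last = token_times[0], token_times[-1]
    ttft = first - request_start
    # The first token is excluded: it is dominated by queueing and prefill,
    # not by decode speed, so it would distort inter-token latency.
    n_after_first = len(token_times) - 1
    tpot = (last - first) / n_after_first if n_after_first else 0.0
    return StreamTiming(ttft, tpot, last - request_start)
```

For a request that starts at t=10.0 s and receives tokens at 10.8, 10.85, 10.9, and 10.95 s, this reports a TTFT of about 0.8 s and roughly 50 ms per output token. A slow TTFT with a normal per-token time usually points at queueing or prefill rather than decode.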

Concrete examples from popular inference servers highlight this:

- NVIDIA Triton Inference Server exposes metrics as plain text via `/metrics` (commonly `:8002/metrics`) and provides flags to enable/disable metrics and select a metrics port.

- vLLM exposes an extensive Prometheus `/metrics` endpoint with a `vllm:` prefix; its documentation includes counters for generation tokens and histograms such as time to first token.

- Hugging Face TGI documents a `/metrics` endpoint with queue size, batch size, end-to-end request duration, generated tokens, and queue duration.
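All three servers expose the Prometheus text exposition format, so a quick way to sanity-check an endpoint before building dashboards is to parse one scrape and look for the series you expect. The sketch below handles only simple `name{labels} value [timestamp]` lines (it skips comments, ignores timestamps, and does not handle a `}` inside label values); the sample payload is illustrative and mixes TGI- and vLLM-style names purely for demonstration.

```python
import re

_SAMPLE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)'  # metric name; ':' allowed, as in vllm:
    r'(?:\{(?P<labels>[^}]*)\})?'            # optional label set
    r'\s+(?P<value>\S+)'                      # sample value
    r'(?:\s+\S+)?$'                           # optional timestamp, ignored
)
_LABEL = re.compile(r'([a-zA-Z_][a-zA-Z0-9_]*)="((?:[^"\\]|\\.)*)"')


def parse_exposition(text: str) -> list[tuple[str, dict[str, str], float]]:
    """Return (metric_name, labels, value) for each sample line."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # blank lines and HELP/TYPE comments
        m = _SAMPLE.match(line)
        if m is None:
            continue  # this simplified parser skips lines it cannot handle
        labels = dict(_LABEL.findall(m.group("labels") or ""))
        samples.append((m.group("name"), labels, float(m.group("value"))))
    return samples


# Illustrative payload, not copied from a real server.
SAMPLE_SCRAPE = """\
# HELP tgi_queue_size Current queue size
# TYPE tgi_queue_size gauge
tgi_queue_size 3
vllm:time_to_first_token_seconds_bucket{le="0.1",model_name="demo"} 42
"""
```

Fetching a live endpoint is then one `urllib.request.urlopen(url).read().decode()` away; for anything beyond spot checks, the official `prometheus_client` parser is the safer choice.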

Core observability tasks and required LLM telemetry

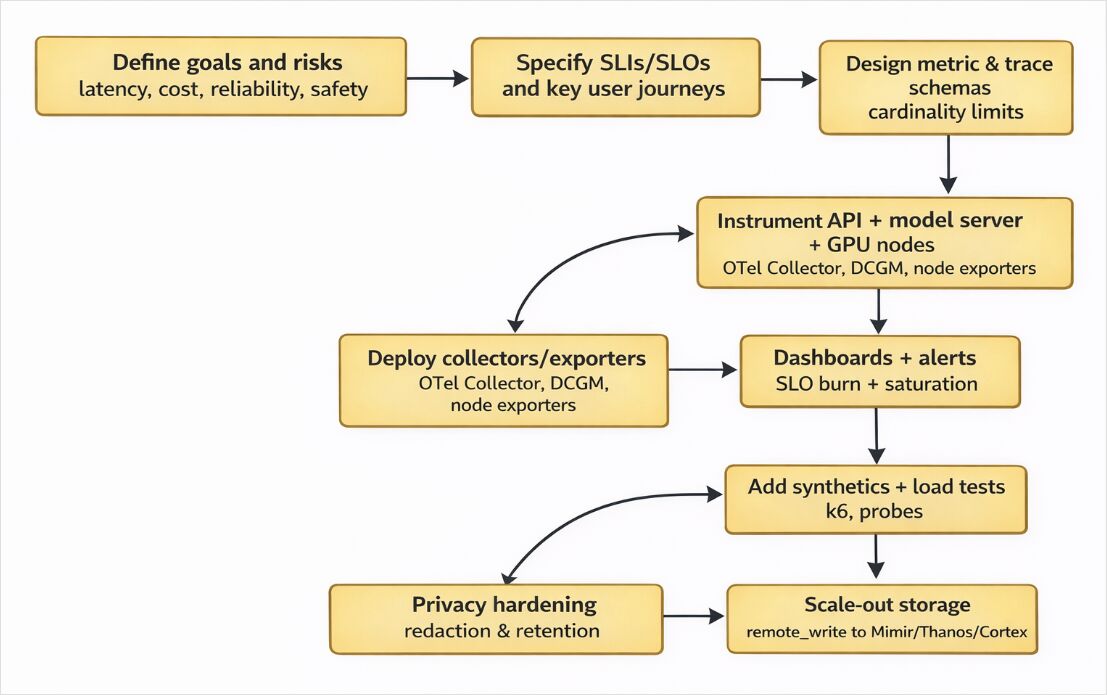

Observability for LLM systems is easiest to implement when you map tasks → signals → tools, and then constrain cardinality and sampling from day one.

Metrics: For online-serving systems, Prometheus’ own instrumentation guidance highlights query count, errors, and latency as key metrics; LLMs expand this with TTFT, per-token throughput/latency, queue length, batch size, and GPU utilisation.

Traces: Traces are how you attribute latency and failures across retrieval/tools/safety/inference stages; OpenTelemetry frames traces/exporters as a vendor-neutral way to emit and send telemetry to collectors or backends.

Logs: Logs provide human-readable context and “why”, but only remain usable at scale if you avoid indexing unbounded values (example: Loki indexes labels only and stores compressed log chunks in object storage).

Profiling: Continuous profiling captures production CPU/memory behaviour with low-overhead sampling; Grafana Pyroscope is explicitly positioned for this.

Synthetic tests and load tests: Grafana k6 is an open-source load testing tool, and Grafana notes Synthetic Monitoring is powered by k6 and extends beyond simple protocol checks.

SLOs: Google’s SRE guidance defines an SLO as a target value/range for a service level measured by an SLI, and provides guidance for alerting on SLOs (precision/recall/detection time trade-offs).
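The SLO vocabulary reduces to a little arithmetic. A minimal sketch, assuming an availability-style SLI (good requests / total requests): the error budget is `1 - SLO`, and the burn rate is the observed error ratio divided by that budget, so a burn rate of 1 spends exactly the budget over the SLO window. The default threshold of 14.4 (checked over a 1h and a 5m window) follows the multi-window pattern in Google's SRE workbook for a 99.9%, 30-day SLO; the function names are hypothetical and the thresholds are a starting point, not a rule.

```python
def error_budget(slo_target: float) -> float:
    """Allowed error ratio for an SLO, e.g. 0.999 -> 0.001."""
    return 1.0 - slo_target


def burn_rate(errors: float, total: float, slo_target: float) -> float:
    """How fast the error budget is being consumed (1.0 = exactly on budget)."""
    if total == 0:
        return 0.0
    return (errors / total) / error_budget(slo_target)


def should_page(long_window: tuple[float, float],
                short_window: tuple[float, float],
                slo_target: float = 0.999,
                threshold: float = 14.4) -> bool:
    """Multi-window burn-rate check: both windows must exceed the threshold.

    Each window is (errors, total) counted over that window, e.g. 1h and 5m.
    The long window gives significance; the short window lets the alert
    clear quickly once the problem stops.
    """
    return (burn_rate(*long_window, slo_target) >= threshold
            and burn_rate(*short_window, slo_target) >= threshold)
```

For example, 150 failed requests out of 10,000 in the last hour is a 1.5% error ratio, a burn rate of about 15 against a 99.9% SLO, which pages only if the last 5 minutes are also burning that fast.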

Key LLM metrics blueprint

| Category | Example metric names | Type | Why it matters | Example sources |
|---|---|---|---|---|
| End-to-end latency | `tgi_request_duration` | Histogram | Tail latency is the user experience | TGI exports this explicitly |
| Time to first token | `vllm:time_to_first_token_seconds`; `gen_ai.server.time_to_first_token` | Histogram | A delayed first token is often the first sign of saturation | vLLM and OTel GenAI semconv |
| Time per output token | `tgi_request_mean_time_per_token_duration`; `gen_ai.server.time_per_output_token` | Histogram | Inter-token latency; a response “feels slow” even if it completes | TGI and OTel GenAI semconv |
| Token usage/volume | `tgi_request_generated_tokens`; `gen_ai.client.token.usage` | Histogram | Cost and capacity are token-driven | TGI and OTel GenAI semconv |
| Requests | `tgi_request_count`; `vllm:request_success_total` | Counter | Traffic baseline and outcomes | TGI and vLLM |
| Queue length | `tgi_queue_size` | Gauge | Queueing predicts latency blowups | TGI |
| Batch size and batch limits | `tgi_batch_current_size`; `tgi_batch_current_max_tokens` | Gauge | Throughput/latency trade-offs | TGI |
| GPU utilisation/memory | `DCGM_*` (exporter-provided) | Gauge | Saturation, OOM risk, scaling trigger | DCGM exporter exposes GPU metrics at `/metrics` |
| Inference server telemetry endpoint | `:8002/metrics` (Triton default) | n/a | Standard scrape target for Prometheus | Triton docs |

OpenTelemetry GenAI semantic conventions for standardisation

OpenTelemetry provides GenAI semantic conventions (status: “Development”) with standard naming for GenAI metrics such as:

- Client: `gen_ai.client.token.usage` and `gen_ai.client.operation.duration`

- Server: `gen_ai.server.request.duration`, `gen_ai.server.time_per_output_token`, and `gen_ai.server.time_to_first_token`

This standardisation is a practical lever for portable “monitoring LLM models with OpenTelemetry” strategies: emit once and route the same telemetry to OSS or vendor backends later.
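As an illustration of what “emit once” looks like, the sketch below turns one completion's token usage into observations named after the GenAI conventions: a single `gen_ai.client.token.usage` metric, split by the `gen_ai.token.type` attribute rather than two separate metrics. The attribute names reflect the Development-status conventions at the time of writing and may change; the `Observation` type and helper are hypothetical, standing in for whatever metrics API you use.

```python
from typing import NamedTuple


class Observation(NamedTuple):
    metric: str
    value: float
    attributes: dict


def token_usage_observations(model: str, input_tokens: int, output_tokens: int,
                             operation: str = "chat") -> list[Observation]:
    """One gen_ai.client.token.usage observation per token type."""
    common = {"gen_ai.operation.name": operation, "gen_ai.request.model": model}
    return [
        Observation("gen_ai.client.token.usage", input_tokens,
                    {**common, "gen_ai.token.type": "input"}),
        Observation("gen_ai.client.token.usage", output_tokens,
                    {**common, "gen_ai.token.type": "output"}),
    ]
```

With the OpenTelemetry SDK, each observation would become a `record()` call on a histogram created via `meter.create_histogram("gen_ai.client.token.usage", unit="{token}")`, and the same names then work unchanged across OSS and vendor backends.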

Designing the telemetry pipeline

Pull vs push

Prometheus is pull-first. Processes expose metrics in a supported exposition format, and Prometheus scrapes them according to configured scrape jobs.

Push is for exceptions. Prometheus’ “When to use the Pushgateway” guide explicitly recommends the Pushgateway only in limited cases (not as a general push replacement), and the Pushgateway README emphasises it cannot “turn Prometheus into a push-based monitoring system”.
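For the rare cases that do fit the Pushgateway, such as a nightly offline evaluation job that exits before Prometheus could scrape it, a push is a plain HTTP request that carries text exposition format to a grouping-key URL (`/metrics/job/<job>/<label>/<value>`). The sketch below only builds the request with the standard library so it can be inspected; the gateway address, job, and metric names are hypothetical, and in practice `prometheus_client.push_to_gateway` does this for you.

```python
import urllib.request
from typing import Optional
from urllib.parse import quote


def build_push_request(gateway: str, job: str, metrics: dict[str, float],
                       grouping: Optional[dict[str, str]] = None) -> urllib.request.Request:
    """Build (but do not send) a Pushgateway PUT for one grouping key."""
    parts = {"job": job, **(grouping or {})}
    path = "/metrics"
    for key, value in parts.items():
        if "/" in value:
            # The Pushgateway needs a base64 form for such values; not handled here.
            raise ValueError(f"label value with '/' not supported: {value!r}")
        path += f"/{key}/{quote(value, safe='')}"
    # One untyped sample per line; the format requires a trailing newline.
    body = "".join(f"{name} {value}\n" for name, value in metrics.items())
    return urllib.request.Request(
        gateway.rstrip("/") + path,
        data=body.encode("utf-8"),
        method="PUT",  # PUT replaces every metric in this group
        headers={"Content-Type": "text/plain; version=0.0.4"},
    )
```

Sending it is `urllib.request.urlopen(req, timeout=5)`. Note the pushed values persist until deleted, which is exactly why the Pushgateway is a poor fit for long-running inference servers.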

LLM-specific practical pattern:

- Use pull for inference servers/exporters (Triton/vLLM/TGI metrics endpoints; DCGM exporter; node metrics).

- Use OTLP push for traces/logs/OTel metrics (OpenTelemetry Protocol defines transport/encoding/delivery between sources, collectors, and backends).

- Use remote write when scaling beyond a single Prometheus (Prometheus provides remote write tuning guidance; Mimir/Thanos/Cortex provide long-term and/or HA storage options).

Agent vs sidecar vs gateway collectors

OpenTelemetry documents an agent deployment pattern, where telemetry is sent to a Collector running alongside the application or on the same host (sidecar/DaemonSet), then exported.

For Kubernetes, sidecar injection is supported via the OpenTelemetry Operator (annotation-based injection).

Pragmatic rule-of-thumb for LLM stacks:

- Use a DaemonSet agent for host-level enrichment and shared pipelines across many pods.

- Use a sidecar when you need strict per-workload isolation or dedicated local filtering (common when prompts may contain sensitive data).

- Use a gateway collector for centralised tail sampling, batching, retries, and export fan-out.

Sampling and cardinality control

OpenTelemetry clarifies that tail sampling allows sampling decisions using criteria derived from a trace (not possible with head sampling alone).
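Tail sampling is easiest to reason about as a decision made once all of a trace's spans have arrived. The sketch below keeps every trace that contains an error or whose root span exceeds a latency threshold, and keeps a deterministic fraction of the rest. The `Span` shape, thresholds, and hash-based ratio are illustrative assumptions, not the OpenTelemetry Collector's tail-sampling processor configuration; the point is that neither criterion is known when the root span starts, which is why head sampling cannot do this.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional


@dataclass
class Span:
    trace_id: str
    parent_id: Optional[str]  # None marks the root span
    duration_s: float
    is_error: bool


def keep_trace(spans: list[Span], slow_threshold_s: float = 5.0,
               baseline_ratio: float = 0.05) -> bool:
    """Decide whether to keep a complete trace."""
    if not spans:
        return False
    if any(s.is_error for s in spans):
        return True  # always keep failures
    roots = [s for s in spans if s.parent_id is None]
    if roots and roots[0].duration_s >= slow_threshold_s:
        return True  # always keep slow requests (tail latency)
    # Deterministic baseline: hashing the trace ID means every collector
    # replica makes the same decision for the same trace.
    digest = hashlib.sha256(spans[0].trace_id.encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < baseline_ratio
```

In an LLM stack, `slow_threshold_s` would typically track your end-to-end latency SLO, so every trace that breaches it is available for latency attribution across retrieval, tools, and inference.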

Prometheus’ instrumentation guidance warns against label overuse, suggests keeping the cardinality of most metrics below about 10, and advises investigating alternatives for any metric whose cardinality exceeds (or could grow past) ~100.

LLM-specific “cardinality traps” to ban early:

- Prompt text, response text, conversation IDs, request IDs as labels/attributes.

- Tool argument blobs as span attributes.

- Unbounded “user_id” labels.

Prefer bounded dimensions: model, model_family, endpoint, region, status_code, deployment, tenant (only if bounded).
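One way to enforce these rules in application code is to check a metric's worst-case series count (the product of distinct values per label) against the ~100 guideline, and to collapse unexpected label values into a single bucket. The helpers below are hypothetical, not part of `prometheus_client`.

```python
from math import prod


def potential_cardinality(label_values: dict[str, set[str]]) -> int:
    """Worst-case number of series for one metric: product of distinct values."""
    return prod(len(values) for values in label_values.values())


class BoundedLabel:
    """Maps raw values onto an allowlist, collapsing everything else."""

    def __init__(self, allowed: set[str], overflow: str = "other"):
        self.allowed = frozenset(allowed)
        self.overflow = overflow

    def __call__(self, raw: str) -> str:
        return raw if raw in self.allowed else self.overflow
```

For example, 3 models × 2 endpoints × 4 status codes is 24 series per metric, but adding a `user_id` label with 10,000 users multiplies that to 240,000. Wrapping user-supplied values such as the requested model name in a `BoundedLabel` keeps a malformed or malicious request from minting new series.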

LLM observability tools comparison

Tools mapped to observability tasks

| Tool | Metrics | Traces | Logs | Profiling | Synthetic tests | SLOs / alerting | LLM relevance |
|---|---|---|---|---|---|---|---|
| Prometheus | ✅ | ◻️ | ◻️ | ◻️ | ◻️ | ✅ | Instrumentation guidance + alerting model; pull-based scraping |
| Grafana | ✅ (viz) | ✅ (viz) | ✅ (viz) | ✅ | ✅ | ✅ | Dashboards are panels over data sources; supports broad data sources |
| OpenTelemetry | ✅ | ✅ | ✅ | ✅ (profiles evolving) | ◻️ | ◻️ | OTLP spec + GenAI semantic conventions; vendor-neutral instrumentation |
| Jaeger | ◻️ | ✅ | ◻️ | ◻️ | ◻️ | ◻️ | Accepts OTLP (gRPC/HTTP) and is a common tracing backend |
| Grafana Tempo | ◻️ | ✅ | ◻️ | ◻️ | ◻️ | ◻️ | High-scale tracing; can generate metrics from spans via metrics-generator |
| Grafana Loki | ◻️ | ◻️ | ✅ | ◻️ | ◻️ | ◻️ | Indexes labels only; stores compressed chunks; reduces log cost at scale |
| Elastic Stack (ELK) | ✅ | ✅ | ✅ | ◻️ | ◻️ | ✅ | Elastic Stack lists Elasticsearch + Kibana foundations; Elastic APM supports OTel integration |
| DCGM exporter | ✅ | ◻️ | ◻️ | ◻️ | ◻️ | ◻️ | GPU metrics exporter exposing /metrics scrape endpoint |
| Mimir / Thanos / Cortex | ✅ | ◻️ | ◻️ | ◻️ | ◻️ | ◻️ | Long-term/HA Prometheus-compatible metrics storage |
| Datadog | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | Accepts OTel traces/metrics/logs; includes sensitive data scanning features |
| New Relic | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | Documents OTLP endpoint configuration and supported OTLP/HTTP practices |
| Honeycomb | ✅ | ✅ | ✅ | ◻️ | ◻️ | ✅ | Supports receiving OTLP over gRPC/HTTP; OTel-first ingestion |
| LangSmith | ◻️ | ✅ | ◻️ | ◻️ | ◻️ | ◻️ | Supports OpenTelemetry-based tracing for LLM apps |

Grafana vs alternatives for visualisation

- Grafana dashboards are composed of panels that query data sources (including Loki and Mimir) to produce charts and visualisations.

- Kibana provides dashboards/visualisations as the UI layer within the Elastic Stack.

- OpenSearch Dashboards provides data visualisation tooling for OpenSearch.

- InfluxData’s documentation positions Chronograf as the visualisation component within the Influx ecosystem.

Prometheus vs alternatives for metrics backends

- Prometheus local storage defaults: if retention flags aren’t set, retention defaults to 15d (plan retention/cost early).

- Grafana Mimir is described as horizontally scalable, HA, multi-tenant long-term storage for Prometheus and OpenTelemetry metrics.

- Thanos is described as a highly available Prometheus setup with long-term storage capabilities.

- Cortex describes itself as a horizontally scalable, HA, multi-tenant long-term storage solution for Prometheus and OpenTelemetry metrics.

- VictoriaMetrics Cloud documents Prometheus remote write integration for long-term storage.

- Amazon Managed Service for Prometheus describes a managed offering that scales with ingestion/query needs and supports PromQL and remote write.

Practical implementation cookbook

Metric names and types to implement today

OpenTelemetry GenAI semantic conventions (status: Development) define metric names you can standardise on immediately:

- `gen_ai.client.token.usage`
- `gen_ai.client.operation.duration`
- `gen_ai.server.request.duration`
- `gen_ai.server.time_per_output_token`
- `gen_ai.server.time_to_first_token`

Server-side examples you can scrape right away:

- vLLM’s Prometheus endpoint includes counters (e.g., generation tokens total) and histograms (TTFT), and documents a `model_name` label strategy.

- TGI documents metrics including queue size, request duration, generated tokens, and mean time per token.

- Triton documents `/metrics` exposure and metric toggles.

PromQL examples for LLM latency and throughput dashboards

```promql
# p95 end-to-end latency for an application histogram
histogram_quantile(
  0.95,
  sum(rate(llm_request_latency_seconds_bucket[5m])) by (le, model)
)

# Error rate percentage (5xx)
100 *
(
  sum(rate(llm_requests_total{status_code=~"5.."}[5m]))
  /
  sum(rate(llm_requests_total[5m]))
)

# Output tokens/sec across all models
# (assumes an application counter llm_tokens_total with a "direction" label)
sum(rate(llm_tokens_total{direction="out"}[5m]))

# TGI queue size (gauge)
max(tgi_queue_size) by (instance)

# vLLM TTFT p95
histogram_quantile(
  0.95,
  sum(rate(vllm:time_to_first_token_seconds_bucket[5m])) by (le, model_name)
)
```

Prometheus’ histogram guidance explains that histogram quantiles are computed server-side from buckets using histogram_quantile().
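To see what `histogram_quantile()` does with those buckets, here is a simplified Python re-implementation of its core step: find the bucket that contains the target rank and interpolate linearly inside it. It is an illustrative sketch of the documented behaviour, assuming sorted cumulative counts that end in a `+Inf` bucket, and it skips Prometheus' handling of edge cases such as non-monotonic buckets; it is not Prometheus source code.

```python
import math


def histogram_quantile(q: float, buckets: list[tuple[float, float]]) -> float:
    """buckets: sorted (upper_bound, cumulative_count) pairs, last bound +Inf."""
    if len(buckets) < 2 or not math.isinf(buckets[-1][0]):
        raise ValueError("need at least two buckets ending with +Inf")
    total = buckets[-1][1]
    if total == 0:
        return math.nan
    rank = q * total
    # First finite bucket whose cumulative count reaches the rank.
    for i, (upper, count) in enumerate(buckets[:-1]):
        if count >= rank:
            break
    else:
        # Rank falls in the +Inf bucket: return the highest finite bound.
        return buckets[-2][0]
    lower, prev_count = (0.0, 0.0) if i == 0 else buckets[i - 1]
    in_bucket = count - prev_count
    if in_bucket == 0:
        return math.nan  # degenerate case (e.g. q=0 with an empty first bucket)
    # Linear interpolation assumes observations are spread evenly in the bucket.
    return lower + (upper - lower) * (rank - prev_count) / in_bucket
```

Two consequences follow: the estimate is never more precise than the bucket layout, and a rank that lands in the `+Inf` bucket returns the highest finite bound. That is why latency buckets should extend comfortably past your worst expected request time.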

OpenTelemetry instrumentation notes for LLM systems

- OTLP is the OpenTelemetry Protocol specifying how telemetry is encoded/transmitted between sources, collectors, and backends.

- OpenTelemetry SDK configuration documents environment variables such as `OTEL_EXPORTER_OTLP_ENDPOINT` (and protocol options) for exporting telemetry.

- OpenTelemetry Python contrib documents FastAPI instrumentation support for automatic and manual instrumentation.

- GenAI semantic conventions include an opt-in stability mechanism via `OTEL_SEMCONV_STABILITY_OPT_IN` for GenAI conventions migration.
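One environment-variable rule commonly trips people up: for OTLP/HTTP, the generic `OTEL_EXPORTER_OTLP_ENDPOINT` is a base URL to which the exporter appends the signal path (`/v1/traces`), while the signal-specific `OTEL_EXPORTER_OTLP_TRACES_ENDPOINT` is used verbatim. The sketch below restates those resolution rules from the OTLP exporter specification as a debugging aid; SDKs already implement them, the function is hypothetical, and it covers only the HTTP exporter (gRPC uses port 4317 and no path).

```python
import os
from collections.abc import Mapping

DEFAULT_HTTP_ENDPOINT = "http://localhost:4318"


def resolve_otlp_http_traces_endpoint(env: Mapping[str, str] = os.environ) -> str:
    """Return the URL an OTLP/HTTP trace exporter would post to."""
    # 1. A signal-specific endpoint is taken as-is (no path is appended).
    specific = env.get("OTEL_EXPORTER_OTLP_TRACES_ENDPOINT")
    if specific:
        return specific
    # 2. The generic endpoint is a base URL; the signal path is appended.
    base = env.get("OTEL_EXPORTER_OTLP_ENDPOINT", DEFAULT_HTTP_ENDPOINT)
    return base.rstrip("/") + "/v1/traces"
```

A frequent symptom of getting this wrong is a collector returning 404s because `/v1/traces` was put in the generic variable and then appended a second time.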

Short Python example: metrics + traces + logs

The snippet below demonstrates:

- Prometheus metrics exposition (`/metrics`) for “monitoring LLM inference with Prometheus”

- OpenTelemetry traces exported via OTLP (vendor-neutral)

- Structured logs correlated with trace context, with a privacy-safe default (don’t log raw prompts)

```python
import asyncio
import json
import logging
import time

from fastapi import FastAPI
from pydantic import BaseModel
from starlette.responses import Response

# Prometheus (pull-based metrics)
from prometheus_client import CONTENT_TYPE_LATEST, Counter, Histogram, generate_latest

# OpenTelemetry (OTLP traces)
from opentelemetry import trace
from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter
from opentelemetry.instrumentation.fastapi import FastAPIInstrumentor
from opentelemetry.sdk.resources import Resource
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.trace.export import BatchSpanProcessor

# --- OpenTelemetry tracer provider (set up before instrumenting the app) ---
provider = TracerProvider(resource=Resource.create({"service.name": "llm-inference-api"}))
# Endpoint and protocol are read from OTEL_EXPORTER_OTLP_* env vars.
provider.add_span_processor(BatchSpanProcessor(OTLPSpanExporter()))
trace.set_tracer_provider(provider)
tracer = trace.get_tracer(__name__)

app = FastAPI(title="LLM Inference API", version="1.0.0")
FastAPIInstrumentor.instrument_app(app)

# --- Logging (privacy-safe default: JSON lines, no raw prompts) ---
logger = logging.getLogger("llm")
logging.basicConfig(level=logging.INFO, format="%(message)s")


def trace_id_hex() -> str:
    ctx = trace.get_current_span().get_span_context()
    return format(ctx.trace_id, "032x") if ctx.is_valid else ""


# --- Prometheus metrics ---
# The request body is user-controlled, so the "model" label is bounded by an
# allowlist; unknown values collapse to "other". Add your served model names.
KNOWN_MODELS = {"unspecified"}

LLM_REQUESTS = Counter(
    "llm_requests_total",
    "LLM requests total",
    ["route", "status_code", "model"],
)
LLM_LATENCY = Histogram(
    "llm_request_latency_seconds",
    "End-to-end LLM request latency (seconds)",
    ["route", "model"],
    buckets=(0.1, 0.2, 0.35, 0.5, 0.75, 1, 1.5, 2, 3, 5, 8, 13),
)


class GenerateRequest(BaseModel):
    prompt: str
    model: str = "unspecified"
    max_tokens: int = 256


class GenerateResponse(BaseModel):
    model: str
    output: str
    latency_ms: int


@app.post("/v1/generate", response_model=GenerateResponse)
async def generate(req: GenerateRequest):
    route = "/v1/generate"
    model_label = req.model if req.model in KNOWN_MODELS else "other"
    status_code = "200"
    start = time.perf_counter()
    try:
        with tracer.start_as_current_span("llm.generate") as span:
            # Emit safe metadata only; never the prompt text.
            span.set_attribute("gen_ai.request.model", req.model)
            span.set_attribute("gen_ai.request.max_tokens", req.max_tokens)
            # Replace with the actual LLM call (Triton/vLLM/TGI client).
            # asyncio.sleep (not time.sleep) avoids blocking the event loop.
            await asyncio.sleep(0.15)
            output = "Hello from the model."
    except Exception:
        status_code = "500"
        raise
    finally:
        # Record metrics and a log line for successes and failures alike.
        latency_s = time.perf_counter() - start
        LLM_LATENCY.labels(route=route, model=model_label).observe(latency_s)
        LLM_REQUESTS.labels(route=route, status_code=status_code, model=model_label).inc()
        logger.info(json.dumps({
            "msg": "llm_request_complete",
            "trace_id": trace_id_hex(),
            "model": model_label,
            "status_code": status_code,
            "latency_ms": int(latency_s * 1000),
            # Do NOT include raw prompt/output unless policy allows it.
        }))
    return GenerateResponse(model=req.model, output=output, latency_ms=int(latency_s * 1000))


@app.get("/metrics")
def metrics():
    return Response(generate_latest(), media_type=CONTENT_TYPE_LATEST)
```

Deployment, scaling, security, and troubleshooting

Deployment options

| Deployment option | Best for | Trade-offs |
|---|---|---|
| Kubernetes + kube-prometheus-stack (Helm) | Standardised cluster monitoring bundle (Prometheus Operator, dashboards, rules) | CRDs/operator lifecycle management |
| Kubernetes + OpenTelemetry Collector (DaemonSet/sidecar) | Standardised OTLP pipelines; local sensitive filtering | Needs sampling/limit tuning |
| Docker Compose | Rapid prototyping on a single host | Not HA; storage is manual |
| systemd / VM installs | Bare-metal GPU fleets and traditional ops | Manual discovery and config |
| Managed services (Grafana Cloud / Datadog / New Relic / AMP) | Fast time-to-value; managed scaling | Cost and governance; vendor lock-in trade-offs |

Scaling and retention: practical constraints

- Prometheus local storage: without explicit size/time flags, the retention time defaults to 15d.

- Prometheus remote write: Prometheus documents remote write tuning for scaling beyond “sane defaults”.

- Grafana Tempo: positioned as a high-scale tracing backend and can generate metrics from spans using the metrics-generator (remote writes to a Prometheus data source).

- Loki storage: Loki’s docs emphasise label-only indexing and compressed chunk storage (object storage), making label strategy central to scale and cost.
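For local Prometheus sizing, the Prometheus storage documentation gives a back-of-the-envelope formula: needed disk ≈ retention time (seconds) × ingested samples per second × bytes per sample, with samples averaging roughly 1 to 2 bytes after compression. A sketch of that arithmetic, deriving ingestion from active series and scrape interval; the series count in the example is made up, and WAL and compaction headroom are not included.

```python
def prometheus_disk_bytes(active_series: int, scrape_interval_s: float,
                          retention_days: float = 15,
                          bytes_per_sample: float = 2.0) -> float:
    """Rough local TSDB size; defaults match the 15d retention default."""
    samples_per_s = active_series / scrape_interval_s
    retention_s = retention_days * 86_400
    return retention_s * samples_per_s * bytes_per_sample
```

For example, 1.5 million active series scraped every 15 s is 100,000 samples/s; at the 15-day default and 2 bytes per sample that is about 259 GB. Remote-write backends such as Mimir or Thanos have their own sizing models.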

Security and privacy: prompts can contain PII

OpenTelemetry’s security guidance stresses that telemetry collection can inadvertently capture sensitive/personal information; you are responsible for handling it appropriately.

Prometheus’ security model warns that Prometheus endpoints should not be exposed to publicly accessible networks (like the internet) because they serve information about monitored systems.

Operational privacy controls that keep “observability for LLM systems” safe:

- Default to not logging raw prompts/responses; log token counts, model name, latency, and trace IDs instead.

- Redact/drop sensitive attributes in collectors/pipelines (for example, with the OpenTelemetry Collector's attributes or redaction processors; collector-level filtering is a common approach across ecosystems).

- Enforce RBAC and retention policies for logs/traces; consider sensitive-data scanners where appropriate (e.g., vendors document scanners for telemetry).
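A collector processor is usually the right place to enforce redaction, but the same policy can also be applied in-process before attributes or log fields leave the application. This sketch drops a denylist of content-bearing keys and masks email addresses in the remaining string values; the key names and the single regex are illustrative assumptions, nowhere near a complete PII detector.

```python
import re
from typing import Any

# Hypothetical denylist: keys that would carry raw prompt/response content.
DROP_KEYS = frozenset({"prompt", "completion", "gen_ai.prompt", "gen_ai.completion"})
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact(attributes: dict[str, Any]) -> dict[str, Any]:
    """Return a copy safe to attach to spans or logs."""
    clean = {}
    for key, value in attributes.items():
        if key in DROP_KEYS:
            continue  # never forward raw content
        if isinstance(value, str):
            value = EMAIL.sub("[REDACTED_EMAIL]", value)
        clean[key] = value
    return clean
```

Token counts, model names, and latencies pass through untouched, which keeps the operationally useful metadata while the content itself never reaches the telemetry backend.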

Troubleshooting checklist

If your Grafana dashboard for LLM latency looks wrong, debug in this order:

- Ingestion health
  - Prometheus: validate scrape success and config semantics (Prometheus configuration defines scrape jobs and instances).
  - OTLP: confirm the exporter endpoint configuration (SDKs read `OTEL_EXPORTER_OTLP_ENDPOINT` and protocol settings).

- Schema mismatch
  - Your dashboard expects `model`, but your server emits `model_name` (vLLM explicitly documents `model_name` labels).

- Cardinality explosion
  - Someone added labels such as request IDs or prompt hashes; Prometheus warns that every labelset is an additional time series with RAM, CPU, disk, and network costs, and provides cardinality guidance.

- Histogram misuse
  - Ensure you compute quantiles from `_bucket` series with `rate()` and keep `le` in the aggregation; Prometheus explains the trade-offs of histogram quantile calculation.

- Trace sampling gaps
  - If you head-sample too aggressively, rare slow or failed traces disappear; tail sampling retains “important” traces based on criteria from the complete trace.

- Tempo span-metrics issues
  - If you use the Tempo metrics-generator with span-metrics, confirm it is enabled and tuned (Tempo documents the metrics-generator and its span-metrics processor, plus troubleshooting for generator issues).

- GPU metrics absent
  - Confirm the DCGM exporter is deployed and its `/metrics` endpoint is reachable (the DCGM exporter exposes GPU metrics over HTTP for Prometheus).
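For the ingestion-health step, the fastest check is usually the `up` series Prometheus records for every scrape target (1 for a successful scrape, 0 for a failure). The sketch below parses a response from the HTTP API's instant-query endpoint (`/api/v1/query?query=up`) and lists failing targets; the `failing_targets` helper is hypothetical, and you would fetch the JSON with any HTTP client.

```python
import json


def failing_targets(api_response: str) -> list[tuple[str, str]]:
    """Return (job, instance) for every target whose latest scrape failed."""
    payload = json.loads(api_response)
    if payload.get("status") != "success":
        raise RuntimeError(f"query failed: {payload.get('error')}")
    failing = []
    for series in payload["data"]["result"]:
        _timestamp, value = series["value"]  # instant vector: [unix_ts, "value"]
        if float(value) == 0:
            labels = series["metric"]
            failing.append((labels.get("job", ""), labels.get("instance", "")))
    return failing
```

An empty result set (rather than `up == 0`) usually means the target was never discovered at all, which points back at scrape configuration or service discovery instead of the exporter.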

Useful links

- Observability: Monitoring, Metrics, Prometheus & Grafana Guide

- Prometheus Monitoring: Setup & Best Practices

- LLM Performance: Benchmarks, Bottlenecks & Optimization

- LLM Hosting: Local, Self-Hosted & Cloud Infrastructure Compared

- RAG Step-by-Step Tutorial

- Prometheus configuration docs

- Prometheus exposition formats

- Prometheus instrumentation best practices

- Prometheus metric naming

- Prometheus histograms and summaries

- Prometheus alerting rules

- Prometheus alerting overview

- Alertmanager configuration

- Grafana dashboard JSON model

- Grafana provisioning

- NVIDIA Triton Inference Server metrics

- TorchServe metrics API

- NVIDIA DCGM exporter

- kube-prometheus-stack Helm chart

- Prometheus Operator getting started

- OpenTelemetry Prometheus exporter spec

- Prometheus guide for receiving OTLP

- LangSmith tracing with OpenTelemetry