Claude, OpenClaw, and the End of Flat Pricing for Agents

Claude subscriptions no longer power agents

The quiet loophole that powered a wave of agent experimentation is now closed.

Remote Ollama access without public ports

Ollama is at its happiest when it is treated like a local daemon: the CLI and your apps talk to a loopback HTTP API, and the rest of the network never finds out it exists.

Git-based deploys, CDN, credits, and trade-offs.

Netlify is one of the most developer-friendly ways to ship Hugo sites and modern web apps with a production-grade workflow: preview URLs for every pull request, atomic deploys, a global CDN, and optional serverless and edge capabilities.

Pick hosted email for your domain without regret.

Putting email on your own domain sounds like a weekend DNS task. In practice it is a small distributed system with a twenty-year legacy.

Install Kafka 4.2 and stream events in minutes.

Apache Kafka 4.2.0 is the current supported release line, and it’s the best baseline for a modern Quickstart because Kafka 4.x is fully ZooKeeper-free and built around KRaft by default.

OpenCode LLM test — coding and accuracy stats

I tested how OpenCode works with several LLMs hosted locally on Ollama and llama.cpp, and for comparison added some free models from OpenCode Zen.

Airtable - Free plan limits, API, webhooks, Go & Python.

Airtable is best thought of as a low-code application platform built around a collaborative, database-like spreadsheet UI. It is excellent for rapidly creating operational tooling (internal trackers, lightweight CRMs, content pipelines, AI evaluation queues) where non-developers need a friendly interface and developers need an API surface for automation and integration.

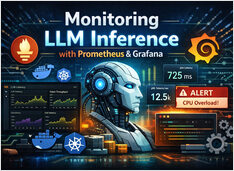

Monitor LLM with Prometheus and Grafana

LLM inference looks like “just another API” — until latency spikes, queues back up, and your GPUs sit at 95% memory with no obvious explanation.

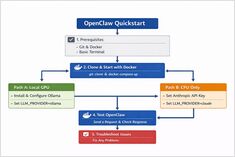

Install OpenClaw locally with Ollama

OpenClaw is a self-hosted AI assistant designed to run with local LLM runtimes like Ollama or with cloud-based models such as Claude Sonnet.

AWS S3, Garage, or MinIO - overview and comparison.

AWS S3 remains the “default” baseline for object storage: it is fully managed, strongly consistent, and designed for extremely high durability and availability.

Garage and MinIO are self-hosted, S3-compatible alternatives: Garage is designed for lightweight, geo-distributed small-to-medium clusters, while MinIO emphasises broad S3 API feature coverage and high performance in larger deployments.

End-to-end observability strategy for LLM inference and LLM applications

LLM systems fail in ways that traditional API monitoring cannot surface — queues fill silently, GPU memory saturates long before CPU looks busy, and latency blows up at the batching layer rather than the application layer.

This guide covers an end-to-end observability strategy for LLM inference and LLM applications: what to measure, how to instrument it with Prometheus, OpenTelemetry, and Grafana, and how to deploy the telemetry pipeline at scale.

Create CloudFront pay-as-you-go via AWS CLI.

The AWS Free plan does not work for me, and the Pay-as-you-go option is hidden for new CloudFront distributions in the AWS Console.

Control data and models with self-hosted LLMs

Self-hosting LLMs keeps data, models, and inference under your control: a practical path to AI sovereignty for teams, enterprises, and nations.

Automate Hugo deployment to AWS S3

Deploying a Hugo static site to AWS S3 using the AWS CLI provides a robust, scalable solution for hosting your website. This guide covers the complete deployment process, from initial setup to advanced automation and cache management strategies.

For broader context on web infrastructure topics, see the web-infrastructure cluster.

Optimize developing and running Hugo sites

Hugo caching strategies are essential for maximizing the performance of your static site generator. While Hugo generates static files that are inherently fast, implementing proper caching at multiple layers can dramatically improve build times, reduce server load, and enhance user experience.