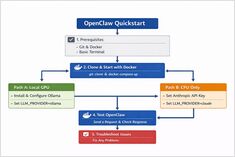

Oh My OpenCode: Specialised Agents Deep Dive and Model Guide

Meet Sisyphus and its specialist agent crew.

The biggest capability jump in OpenCode comes from specialised agents, which deliberately separate orchestration, planning, execution, and research.