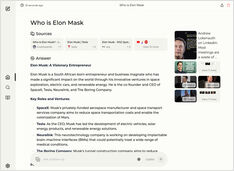

Comparison of Hugo Page Translation quality - LLMs on Ollama

qwen3 8b, 14b and 30b, devstral 24b, mistral small 24b

In this test I’m comparing how well different LLMs hosted on Ollama translate a Hugo page from English to German. The three pages I tested covered different topics and contained nicely structured markdown: headers, lists, tables, links, etc.
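For readers who want to try something similar, here is a minimal sketch of how one such translation request could be sent to a local Ollama server. It is not the exact setup used in this test: the prompt wording, the helper names `build_payload` and `translate`, and the model tag `qwen3:8b` are assumptions for illustration, and the URL assumes Ollama's default local port.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(model: str, page_markdown: str) -> dict:
    """Build a non-streaming request body for Ollama's /api/generate endpoint.

    The prompt wording is a hypothetical example, not the prompt used in the test.
    """
    prompt = (
        "Translate the following Hugo page from English to German. "
        "Keep the markdown structure (headers, lists, tables, links) unchanged.\n\n"
        + page_markdown
    )
    return {"model": model, "prompt": prompt, "stream": False}


def translate(model: str, page_markdown: str, url: str = OLLAMA_URL) -> str:
    """Send the page to the given model and return the generated text."""
    data = json.dumps(build_payload(model, page_markdown)).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        body = json.loads(response.read().decode("utf-8"))
    # With "stream": False the reply is a single JSON object whose
    # "response" field holds the full generated text.
    return body["response"]
```

Calling `translate("qwen3:8b", page_text)` once per model on the same page gives outputs that can be compared side by side.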